TL;DR: Boost AI Agent Results in Startup Workflows (2026)

Future-focused AI agents have the potential to revolutionize personal and business tasks, but they still face challenges like inaccurate outputs, security risks, and poor contextual memory. To improve performance:

• Build modular scaffolding that orchestrates tools and memory for more reliable workflows.

• Pair them with human oversight as in Bright Data's framework.

• Tune prompts for better results with guides like OneReach's instructions.

• Improve contextual interactions by integrating memory layers.

Ready to make AI an effective business tool? Check out AI agents for startup founders to learn which tools align with your goals.


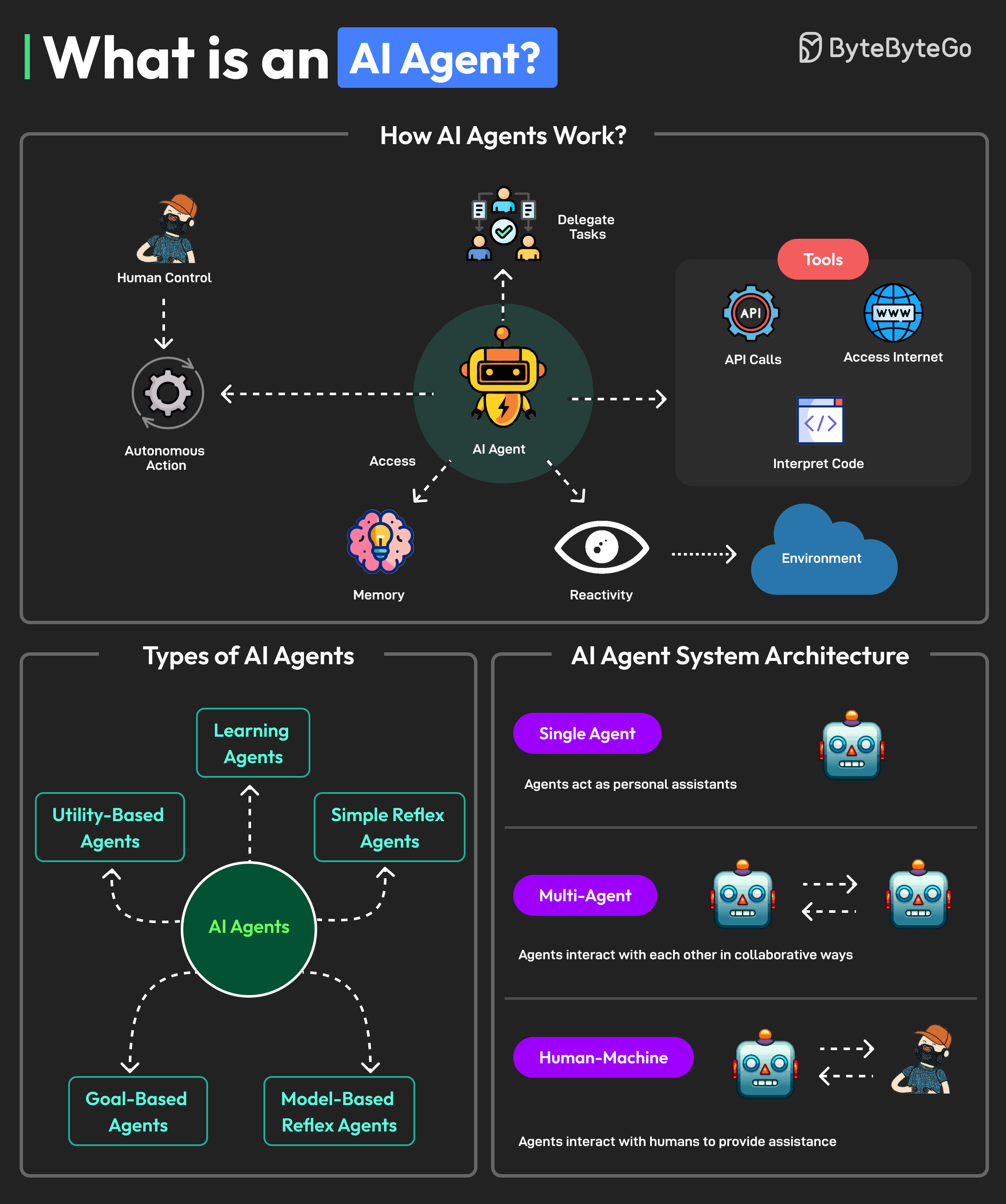

Artificial Intelligence (AI) agents are evolving rapidly, and in 2026 their applications are spreading across industries, from enterprise solutions to daily personal assistants. Despite these advances, improving these systems remains a challenge. I’ve spent years building AI tools integrated into entrepreneurial workflows, so let me share how you can get AI agents to deliver results that truly matter.

What are the Key Challenges When It Comes to AI Agents in 2026?

Despite their growth, AI agents suffer from gaps in autonomy, decision-making accuracy, security, and contextual understanding. Let me cut through the noise and identify common pain points:

- Hallucination: Agents can confidently provide incorrect information, working against user objectives.

- Scalability dilemmas: Handling increased data loads or integrating new APIs often leads to breakdowns.

- Security vulnerabilities: Inadequate compliance setups expose sensitive systems to potential risks.

- Tool confusion: Multi-tool workflows frequently collapse due to poor orchestration.

- Lack of contextual memory: Agents fail to utilize past actions or conversations meaningfully.

For context, AI giants like OpenAI and Google are reshaping models, but gaps persist, particularly for smaller organizations attempting to deploy these technologies effectively.

How Can You Boost the Performance of AI Agents?

Improving AI agents isn’t just about “better models.” It’s about designing systems adapted to user goals and environments. These methods have worked for me, and they can work for you, whether you’re automating startup workflows or deploying enterprise solutions:

- Create strong AI scaffolding: Use modular designs like FactFlux in Bright Data’s AI roadmap, which orchestrates multiple tools and memory layers.

- Position human-in-the-loop checks: Adopt hybrid operating models like those described in CloudKeeper’s Agentic Trends report. Humans handle exception tasks while AI takes charge of repeatable workloads.

- Optimize system instructions: Bad prompts equal bad outcomes. Iterative tuning of system instructions, supported by resources like the Enterprise Guide 2026 from OneReach, keeps agents responsive.

- Integrate flexible memory layers: Examples like Google’s Vertex AI memory solutions demonstrate how short-term and long-term memory strengthen contextual engagement.

- Test and grade continually: Develop scenario-based test suites with weighted scoring rubrics for output performance. This is your foundation for feedback loops similar to Zapier’s model.
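To make the last point concrete, here is a minimal sketch of a scenario-based test suite with a weighted scoring rubric. Everything in it is an assumption for illustration: the `Criterion` class, the rubric entries, the 0.8 pass threshold, and the `stub_agent` stand-in are hypothetical, and this is not Zapier's model or any vendor's API.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Criterion:
    name: str
    weight: float
    check: Callable[[str], bool]  # returns True when the output passes


def score_output(output: str, rubric: list[Criterion]) -> float:
    """Return the weighted share of criteria the output passes (0.0 to 1.0)."""
    total = sum(c.weight for c in rubric)
    if total <= 0:
        raise ValueError("rubric weights must sum to a positive number")
    earned = sum(c.weight for c in rubric if c.check(output))
    return earned / total


def run_suite(agent, scenarios: dict[str, str], rubric, threshold: float = 0.8):
    """Run every scenario prompt through the agent and grade each reply."""
    scores = {name: score_output(agent(prompt), rubric)
              for name, prompt in scenarios.items()}
    passed = all(score >= threshold for score in scores.values())
    return scores, passed


# Hypothetical rubric for a support agent; weights reflect relative importance.
RUBRIC = [
    Criterion("mentions refund window", 2.0, lambda out: "30 days" in out),
    Criterion("stays concise", 1.0, lambda out: len(out) <= 200),
    Criterion("avoids guarantees", 1.0, lambda out: "guarantee" not in out.lower()),
]


def stub_agent(prompt: str) -> str:
    # Stand-in for a real agent call, so the sketch runs offline.
    return "Refunds are available within 30 days of purchase."


scores, passed = run_suite(stub_agent, {"refund_question": "Can I get a refund?"}, RUBRIC)
print(scores, passed)
```

Re-running the same suite after every prompt or tool change gives you a comparable score over time, which is what turns grading into a feedback loop.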

My rule of thumb is simple: Always iterate. At Fe/male Switch, we treat AI agents like digital teammates. That means demanding review cycles, detailed feedback forms, and strict changelogs, not some “deploy and forget” mindset.

What Are the Biggest Mistakes to Avoid?

- Narrow focus: Bots tuned for single tasks cannot scale across dynamic requirements.

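- Skipping security: Poor data encryption often results in compliance failures. Orchestration frameworks such as LangGraph and AutoGen can give agent workflows a clear structure, but they do not deliver compliance on their own; encryption, access control, and audit logging still have to be built around them.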

- Ignoring memory: Context-free interactions leave users frustrated, especially for long, multi-turn workflows.

- Over-promising: Agents are tools, not magic wands. Overstating performance creates user distrust.

- Under-testing: Deploy without pilot programs, and you risk failure. Start with controlled environments as per Netcom Learning’s guide to building from scratch.

There’s no shortcut here. Comprehensive validation workflows save time and money down the line.

Detailed Performance Strategy

When designing improvement processes for AI agents, break workflows into clear phases. These are steps I’ve used successfully:

- Version Control: Retain full development logs with semantic versioning (e.g., 2.1.0).

- Scenario-Specific Validation: Gather diverse real-world samples before deployment.

- Feedback Loop: Implement weekly reviews, user surveys, and heatmap tools for live feedback.

- Gradual Scaling: Test workflows incrementally against larger datasets.

- Security Buffers: Embed API logs, traceability, and encryption inside tool deployment configurations.
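As an illustration of the version-control step, the sketch below bumps a semantic version and records a changelog entry each time an agent's prompt or tooling changes. The `bump` helper, the `AgentChangelog` class, and the sample note are hypothetical names chosen for this example, not part of any specific tool.

```python
from dataclasses import dataclass, field
from datetime import date


def bump(version: str, change: str) -> str:
    """Return the next MAJOR.MINOR.PATCH version for a given change type."""
    major, minor, patch = (int(part) for part in version.split("."))
    if change == "major":   # breaking change in agent behavior
        return f"{major + 1}.0.0"
    if change == "minor":   # new capability, backward compatible
        return f"{major}.{minor + 1}.0"
    if change == "patch":   # small fix, e.g. prompt wording
        return f"{major}.{minor}.{patch + 1}"
    raise ValueError(f"unknown change type: {change!r}")


@dataclass
class AgentChangelog:
    version: str = "0.1.0"
    entries: list = field(default_factory=list)

    def record(self, change: str, note: str) -> str:
        """Bump the version and log what changed and when."""
        self.version = bump(self.version, change)
        self.entries.append((self.version, date.today().isoformat(), note))
        return self.version


log = AgentChangelog(version="2.1.0")
log.record("patch", "Tightened system prompt wording")
print(log.version)
```

Keeping the note next to the version number means a regression in the test scores can be traced back to the exact change that caused it.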

When onboarding new team members, I insist on user training that emphasizes these strategies. Engineers, designers, and founders must partner with the agent, not treat it as just another tool.

The Future of AI Agents: Niche, Multi-Agent Systems, and Responsibility

In 2026, AI agents are not optional; they’re vital, like electricity for startups. But their future also brings overload. Instead of “super agents” trying to tackle everything, we’ll see modular, multi-agent systems that communicate with each other: for example, distributed agents handling specific tasks across engineering, customer support, and operations.
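A rough sketch of that idea: a small router that hands each task to a specialized agent and falls back to a person when no agent owns the domain. The `Router` class, the domain names, and the lambda agents are placeholders assumed for illustration; a real system would call actual models or services here.

```python
from typing import Callable

Agent = Callable[[str], str]


class Router:
    """Dispatch tasks to one specialized agent per domain."""

    def __init__(self, fallback: Agent):
        self._agents: dict[str, Agent] = {}
        self._fallback = fallback  # used when no agent owns the domain

    def register(self, domain: str, agent: Agent) -> None:
        self._agents[domain] = agent

    def dispatch(self, domain: str, task: str) -> str:
        agent = self._agents.get(domain, self._fallback)
        return agent(task)


# Placeholder agents; each would wrap its own model, tools, and memory.
router = Router(fallback=lambda task: f"needs human triage: {task}")
router.register("support", lambda task: f"support agent handled: {task}")
router.register("engineering", lambda task: f"engineering agent handled: {task}")

print(router.dispatch("support", "reset a password"))
print(router.dispatch("finance", "approve an invoice"))
```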

Entrepreneurs managing these systems must remain conscious of ethics. AI agents can supercharge productivity, but their misuse magnifies risks. My guiding principle: humans stay in control, with technology acting as scaffolding.

Final Takeaway: Iteration, Control, and Vision

Improving AI agents is a relentless process of iteration and user-centric design. Whether you’re a CEO of a large-scale enterprise or a founder running a scrappy startup, success depends on tight validation loops, actionable feedback, and scalable frameworks that adapt to user demands.

Ready to equip your startup with AI-driven tools that align with your workflow? Browse frameworks like Bright Data’s roadmap and existing resources to pick modular solutions tailored to your infrastructure.

“Don’t use AI to replace your decisions. Use it to amplify them.” - Violetta Bonenkamp

FAQ on Improving AI Agents in 2026

What are the key challenges in adopting AI agents in startups?

Adopting AI agents poses hurdles like decision-making inaccuracies, scalability limitations, and security concerns. Tailored implementation focusing on autonomy and contextual understanding can mitigate these issues. Explore AI Automations For Startups | 2026 EDITION.

How can startups enhance AI agent-based workflows?

Startups should refine processes through iterative testing, building feedback loops, and leveraging modular AI systems, such as Google Vertex AI's memory solutions. Discover top AI agents for startups.

Are multi-agent systems the future of AI adoption?

Yes, AI adoption is shifting toward multi-agent systems that distribute tasks efficiently across operations like customer support and marketing. This modular approach enhances scalability and functionality. Learn more about Moltbook for multi-agent collaboration.

What are hybrid AI operational models, and why are they useful?

Hybrid operational models blend AI with human oversight: humans handle exceptions and critical tasks while AI handles repeatable workflows, which improves accuracy and reduces errors. Dive into practical hybrid AI strategies for startups.
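The hybrid split can be sketched as a simple routing rule: auto-approve routine, high-confidence outputs and escalate everything else to a person. The `Decision` class, the 0.85 threshold, and the keyword list are assumptions for illustration, and the sketch assumes the agent reports a confidence score, which not every system does.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    task: str
    answer: str
    confidence: float  # assumed to be reported by the agent, 0.0 to 1.0


def route(decision: Decision,
          threshold: float = 0.85,
          sensitive_keywords: tuple = ("refund", "legal", "contract")) -> str:
    """Return 'human_review' for exceptions, 'auto_approve' for routine work."""
    if decision.confidence < threshold:
        return "human_review"   # the agent is unsure
    if any(word in decision.task.lower() for word in sensitive_keywords):
        return "human_review"   # critical task, regardless of confidence
    return "auto_approve"


print(route(Decision("Summarize meeting notes", "Summary text", 0.95)))
print(route(Decision("Draft legal notice", "Notice text", 0.99)))
```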

How do security concerns impact AI agent deployment?

Inadequate compliance setups expose systems to vulnerabilities. Structuring agents with an orchestration framework like LangGraph, and pairing it with secure APIs, encryption, and audit logging, can reduce those risks. Understand security benchmarks for AI agents.

How can AI agents' performance be evaluated post-deployment?

Implement scenario-based test suites with metrics like success rates and feedback aggregation for continuous performance refinement. Tools like Zapier's weighted scoring templates can simplify this. Check out comprehensive validation strategies.

What role does contextual memory play in AI agent efficiency?

Memory layers, such as the memory solutions in Google's Vertex AI, support better contextual interactions by letting agents recall past actions and conversations. This improves the user experience in multi-step workflows. Explore memory layers with Google Vertex AI.
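To show the short-term versus long-term distinction, here is a minimal in-process sketch. It is not the Vertex AI API: the `AgentMemory` class and its methods are hypothetical, the short-term layer is just a bounded window of recent turns, and the long-term layer is a plain dictionary of facts that persists across the conversation.

```python
from collections import deque


class AgentMemory:
    """Short-term memory is a rolling window; long-term memory is a fact store."""

    def __init__(self, short_term_size: int = 3):
        self.short_term = deque(maxlen=short_term_size)  # oldest turns drop off
        self.long_term: dict[str, str] = {}

    def remember_turn(self, role: str, text: str) -> None:
        self.short_term.append((role, text))

    def store_fact(self, key: str, value: str) -> None:
        self.long_term[key] = value

    def build_context(self) -> str:
        """Assemble the text that would be prepended to the next prompt."""
        facts = [f"{key}: {value}" for key, value in sorted(self.long_term.items())]
        turns = [f"{role}: {text}" for role, text in self.short_term]
        return "\n".join(["Known facts:", *facts, "Recent turns:", *turns])


memory = AgentMemory(short_term_size=2)
memory.store_fact("plan", "pro")
memory.remember_turn("user", "hi")
memory.remember_turn("agent", "hello")
memory.remember_turn("user", "upgrade me")
print(memory.build_context())
```

With a window of two, the first turn has already been dropped by the time the context is built, while the stored fact is still available.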

What pitfalls should be avoided when deploying AI agents?

Common pitfalls include over-reliance on AI, ignoring memory in multi-turn interactions, and neglecting security protocols. To succeed, startups should emphasize user training and data governance. Uncover common mistakes in AI integration.

How can AI agents impact startup marketing strategies?

AI agents enable cost-efficient automation in campaigns, from personalized email sequences to social media engagement. Pairing them with large language models (LLMs) can speed up content workflows. Leverage AI for startup marketing automation.

How does scalability affect AI agents in startups?

Scalability determines whether AI agents keep working as data loads and workflows grow. Startups should build on modular architectures that leave room for growth. Maximize scalability for your startup.

About the Author

Violetta Bonenkamp, also known as MeanCEO, is an experienced startup founder with an impressive educational background including an MBA and four other higher education degrees. She has over 20 years of work experience across multiple countries, including 5 years as a solopreneur and serial entrepreneur. Throughout her startup experience she has applied for multiple startup grants at the EU level, in the Netherlands and Malta, and her startups received quite a few of those. She’s been living, studying and working in many countries around the globe and her extensive multicultural experience has influenced her immensely.

Violetta is a true multiple specialist who has built expertise in Linguistics, Education, Business Management, Blockchain, Entrepreneurship, Intellectual Property, Game Design, AI, SEO, Digital Marketing, cyber security and zero code automations. Her extensive educational journey includes a Master of Arts in Linguistics and Education, an Advanced Master in Linguistics from Belgium (2006-2007), an MBA from Blekinge Institute of Technology in Sweden (2006-2008), and an Erasmus Mundus joint program European Master of Higher Education from universities in Norway, Finland, and Portugal (2009).

She is the founder of Fe/male Switch, a startup game that encourages women to enter STEM fields, and also leads CADChain, and multiple other projects like the Directory of 1,000 Startup Cities with a proprietary MeanCEO Index that ranks cities for female entrepreneurs. Violetta created the “gamepreneurship” methodology, which forms the scientific basis of her startup game. She also builds a lot of SEO tools for startups. Her achievements include being named one of the top 100 women in Europe by EU Startups in 2022 and being nominated for Impact Person of the year at the Dutch Blockchain Week. She is an author with Sifted and a speaker at different Universities. Recently she published a book on Startup Idea Validation the right way: from zero to first customers and beyond, launched a Directory of 1,500+ websites for startups to list themselves in order to gain traction and build backlinks and is building MELA AI to help local restaurants in Malta get more visibility online.

For the past several years Violetta has been living between the Netherlands and Malta, while also regularly traveling to different destinations around the globe, usually due to her entrepreneurial activities. This has led her to start writing about different locations and amenities from the point of view of an entrepreneur. Here’s her recent article about the best hotels in Italy to work from.