TL;DR: Human memory works through a two-stage hippocampal-neocortical system that encodes experiences, consolidates them during sleep, and reconstructs memories rather than playing them back. Current AI memory systems face four hard problems: context window limits, catastrophic forgetting, no persistent episodic memory between sessions, and the cost of persistent memory at scale. In April 2026, actress Milla Jovovich and developer Ben Sigman released MemPalace, a free open-source AI memory system that borrows the ancient Greek method of loci to organize AI conversations into navigable structures, scoring 96.6% on the LongMemEval benchmark. For bootstrapped European startups, this matters because memory-optimized AI systems typically reduce API costs by 30 to 60 percent while dramatically improving the quality of AI-assisted work.

Here is a claim that will bother you: the AI tools your startup depends on every day forget (almost) everything about you the moment you close the tab. Every context you built, every decision you explained, every preference you set, gone. And while you waste time re-explaining yourself to a machine, your competitors are building systems that actually remember.

If you follow AI, you probably track some of the projects that try to solve the memory problem. Claude skills is one solution, although it addresses only a small part of the problem, as I will explain in this article. When my team was building PayPals, AI co-founders for entrepreneurs, we tackled the memory issue and it worked reasonably well (we built personalization and skills well before OpenAI and Anthropic released these features).

Then, of course, there is OpenClaw, which uses heartbeat.md and persistent sessions to preserve the conversation history. Check it out in my OpenClaw for Startups research. It’s fascinating, but rather technical.

This article takes a more science-based approach and gives you the neuroscience behind why humans remember things the way they do, the current state of AI memory research including its real bottlenecks, the tools you can grab today for free, and practical steps to stop losing knowledge across your startup.

If you run a bootstrapped venture in Europe, where every euro of wasted effort counts, this is directly relevant to your bottom line.

And it’s simply a super interesting topic to get into.

The Shocking Truth About How Humans Actually Remember Things

If you don’t know science, everything is a miracle.

Most people believe memory works like a camera recording a video. Neuroscience says otherwise, and understanding the difference will change how you think about your own learning and your AI tools.

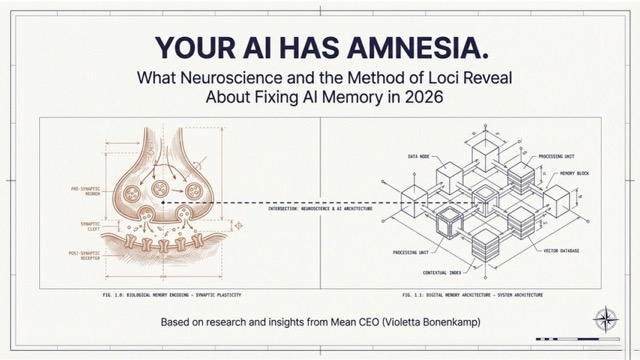

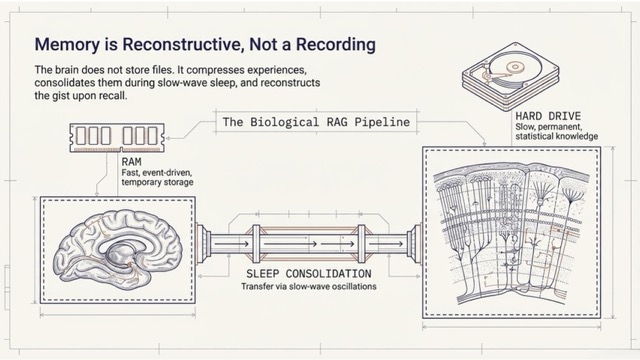

The human brain does not store memories as files. According to research published in Frontiers in Computational Neuroscience, new experiences are initially encoded in the hippocampus as rapid associative patterns and then gradually consolidated into the neocortex over time, often during sleep. What you experience as “remembering” is actually a reconstruction, not a replay.

Here is why this matters.

The Hippocampus as Rapid Encoder

The hippocampus, a seahorse-shaped structure deep in the medial temporal lobe, acts as a fast-write storage system for new episodic information. Think of it as the RAM in your laptop: fast, temporary, and essential for working. The neocortex, by contrast, functions like your hard drive: slower to write but more permanent.

What the hippocampus encodes is not the full event. Researchers at the University of Edinburgh working on the AToM-FM project have shown that the brain compresses memories, storing what was surprising or emotionally significant and discarding redundant detail. This is why you remember the plot twist in a film better than the opening credits.

The hippocampus also plays a central role in imagination and future planning. Patients with bilateral hippocampal damage not only cannot form new memories, they also struggle to imagine detailed future scenarios. Memory and imagination run on the same cognitive hardware.

Consolidation: Why Sleep Is a Startup Founder’s Secret Weapon

During slow-wave sleep, the hippocampus replays compressed versions of the day’s experiences and sends them to the neocortex for long-term storage. Research published in Nature Neuroscience demonstrated that synchronizing prefrontal stimulation with hippocampal slow waves during sleep measurably improved next-day recognition memory in human subjects. Precise timing with natural brain oscillations enhanced the consolidation of declarative memory.

The practical implication for founders: the cognitive work you do to encode information matters enormously. Spaced repetition, emotional salience, and sleep all improve consolidation. Cramming before a pitch, then going to bed late, is a neurologically poor strategy.

Memory Is Reconstructive, Not Reproductive

Every time you recall a memory, the hippocampus retrieves a compressed gist from storage and the neocortex reconstructs the details based on your current knowledge and expectations. A computational neuroscience model from bioRxiv showed that this hippocampal-neocortical system works essentially like a retrieval-augmented generation (RAG) pipeline: the hippocampus holds compressed episode codes, the neocortex holds statistical world knowledge, and retrieval prompts the neocortex to reconstruct a plausible version of the original event.

This reconstruction is also why memory is fallible. You do not retrieve what happened. You generate what probably happened, filtered through everything you know now.

Three Types of Human Memory That AI Researchers Study

Understanding these categories is essential for evaluating AI memory tools:

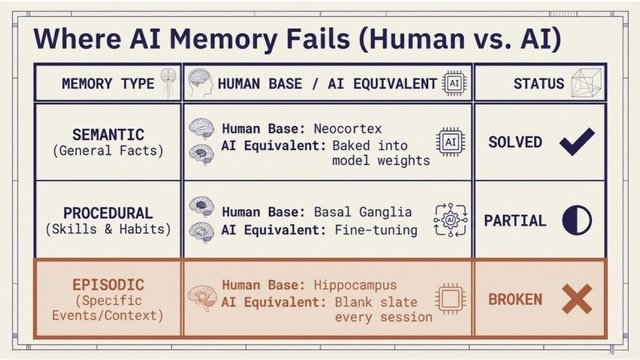

- Episodic memory: specific events tied to time and place (“I met that investor at WebSummit in Lisbon in 2023”). Hippocampus-dependent throughout life.

- Semantic memory: general facts and concepts, decoupled from context (“the Netherlands has a competitive ecosystem for deep-tech startups”). These migrate from hippocampus to neocortex over time.

- Procedural memory: skills and habits that become automatic, stored in the basal ganglia and cerebellum (“I know how to pitch without thinking about it”).

AI systems struggle most with episodic memory. They have reasonable semantic memory baked into their weights via training. Procedural memory is effectively what fine-tuning tries to produce.

AI Memory: The State of Research in 2026

The gap between what humans can do with memory and what AI systems can currently do is large, and it is actively shrinking. Here is where the research stands as of April 2026.

The Four Memory Problems AI Faces Right Now

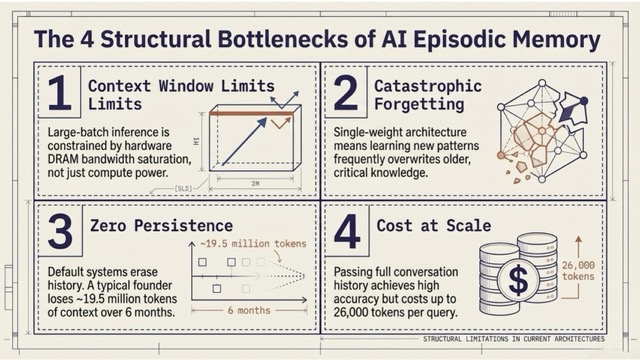

1. Context window limits. Every current large language model (LLM) has a finite context window, the working memory it can attend to in a single session. Even with models extending to hundreds of thousands of tokens, research from IBM presented at CLOUD 2025 found that large-batch inference remains constrained by DRAM bandwidth saturation, not compute. The memory hardware itself is the ceiling.

2. Catastrophic forgetting. When an AI model learns something new, it tends to overwrite previously learned patterns. This is the opposite of how the hippocampal-neocortical system works in humans, where the two-stage structure prevents new learning from destroying old knowledge. Research on machine memory intelligence from multiple labs has confirmed this as one of the three core bottlenecks in current AI architectures, alongside excessive compute requirements and weak logical reasoning.

3. No persistent episodic memory between sessions. By default, every LLM session starts blank. Six months of daily conversations with your AI tools produce, according to MemPalace’s documentation, roughly 19.5 million tokens of lost context. For a founder who uses AI to think through product decisions, draft customer communications, and track competitive research, this is a serious productivity drain.

4. The cost of memory at scale. The Mem0 research paper benchmarked ten different approaches to AI memory, including passing the full conversation history in context, which is the most accurate approach and also the most expensive. A system that scores well on accuracy but requires 26,000 tokens per query is not production-viable for a bootstrapped startup.
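To make the fourth problem concrete, here is a rough back-of-the-envelope sketch. Only the 26,000 tokens per query comes from the text above. The query volume, the price per million tokens, and the 2,000-token size of a retrieved context are hypothetical placeholders, so plug in your own numbers.

```python
def monthly_input_cost(tokens_per_query: int, queries_per_day: int,
                       price_per_million_tokens: float, days: int = 30) -> float:
    """Return the monthly spend on input tokens, in the currency of the price."""
    total_tokens = tokens_per_query * queries_per_day * days
    return total_tokens / 1_000_000 * price_per_million_tokens


# Hypothetical workload: 200 queries per day at 3.00 per million input tokens.
full_context = monthly_input_cost(26_000, 200, 3.00)  # full history in every prompt
retrieval = monthly_input_cost(2_000, 200, 3.00)      # assumed size of a retrieved context

print(f"full context: {full_context:.2f} per month")
print(f"retrieval:    {retrieval:.2f} per month")
```

The absolute figures depend entirely on the assumed price and volume. What carries over is the ratio: the bill scales linearly with the tokens you send per query.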

The KV Cache: Where the Real Bottleneck Lives

Inside any LLM inference process, there is a data structure called the key-value (KV) cache. It stores the attention keys and values computed for every token the model has already processed, including the reasoning tokens it generates while answering a question, so they do not have to be recomputed at each step. The cache grows with sequence length, which is why long contexts and long reasoning chains are memory-hungry. Research from the University of Edinburgh presented at NeurIPS 2025 introduced Dynamic Memory Sparsification (DMS), a technique that compresses the KV cache by selecting which tokens to retain. Even at one-eighth the original size, compressed models retained full accuracy on mathematics benchmarks and scored 12 points higher on AIME 24, the qualifier for the US Mathematical Olympiad.

The implication: smarter memory management does not just reduce cost. It actively improves reasoning quality.
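To get a feel for why the KV cache becomes the bottleneck, the sketch below applies the standard sizing arithmetic: one key tensor and one value tensor per layer, per token. The model dimensions are hypothetical and chosen only for illustration. This is sizing arithmetic, not an implementation of DMS; the one-eighth figure is simply applied to the result.

```python
def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   seq_len: int, bytes_per_value: int = 2, batch_size: int = 1) -> int:
    """Size of the KV cache in bytes for one forward context."""
    # Factor 2: each layer keeps one key tensor and one value tensor.
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * bytes_per_value * batch_size


# Hypothetical model: 32 layers, 8 KV heads, head dimension 128, 16-bit values,
# holding a 128,000-token context.
full = kv_cache_bytes(n_layers=32, n_kv_heads=8, head_dim=128, seq_len=128_000)
compressed = full // 8  # the one-eighth compression ratio mentioned above

GIB = 1024 ** 3
print(f"full cache:       {full / GIB:.2f} GiB")
print(f"compressed cache: {compressed / GIB:.2f} GiB")
```

Because the size is linear in sequence length and batch size, every token evicted from the cache frees memory for a longer context or a larger batch on the same hardware.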

Current Approaches to AI Memory Architecture

Researchers and engineers are attacking the problem from several angles simultaneously.

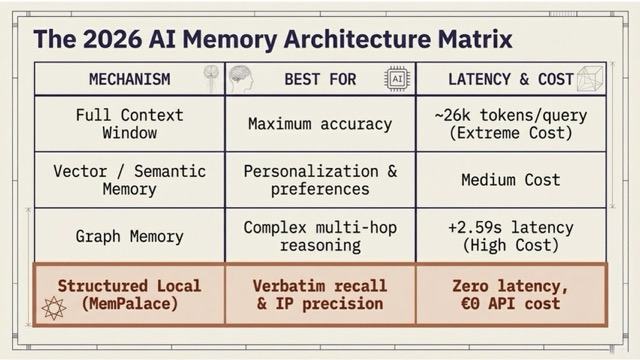

| Approach | How It Works | Best For | Cost |

|---|---|---|---|

| Full context window | Entire history in prompt | Maximum accuracy | Very high (~26k tokens/query) |

| Vector/semantic memory (e.g. Mem0) | Embed and retrieve relevant chunks | Personalization, preferences | Medium |

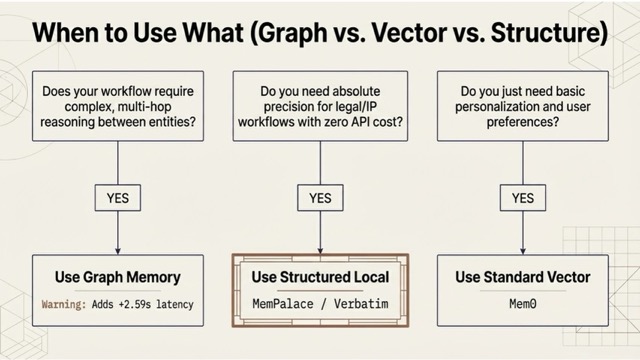

| Graph memory (e.g. Mem0g) | Stores relationships between entities | Complex multi-hop reasoning | Medium-high, +2.59s latency |

| Parametric memory (fine-tuning) | Bake knowledge into model weights | Stable domain knowledge | High upfront, low inference |

| Structured local memory (e.g. MemPalace) | Hierarchical local storage, zero LLM | Verbatim recall, zero API cost | Free |

| KV cache compression (DMS) | Token eviction with transfer delay | Faster reasoning at scale | Compute savings |

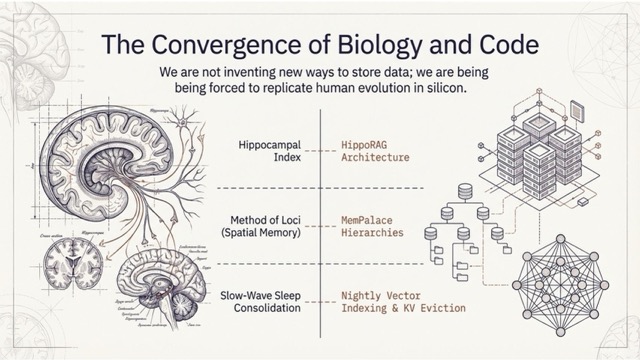

HippoRAG, a neurobiologically inspired architecture, is modeled directly on the hippocampal index system, in which the hippocampus links memories through associative connections. Rather than flat vector retrieval, it builds a graph of connected memory nodes, mimicking the CA3 region of the hippocampus, which generates novel associative patterns during recall.

What Graph Memory Adds

By early 2026, graph memory moved from experimental to production. The distinction between vector and graph memory is precise: vector memory retrieves semantically similar facts, while graph memory retrieves facts connected through relationships. For a startup managing a complex product with many interdependent components, graph memory means your AI can reason about how a change in one area affects another, rather than retrieving isolated facts. The tradeoff is a 2.59 second additional latency, which is acceptable for most non-real-time use cases.
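The difference between the two retrieval styles is easier to see in a toy example. The sketch below is not how Mem0 or Mem0g work internally. The three-dimensional "embeddings", the component names, and the `depends_on` relation are all made up for illustration.

```python
import math
from collections import deque


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


# Vector memory: text mapped to hand-made 3-dimensional embeddings (hypothetical values).
vector_memory = {
    "Billing service uses Stripe": [0.9, 0.1, 0.0],
    "Checkout page redesign shipped": [0.2, 0.9, 0.1],
    "Team offsite is in Valletta": [0.0, 0.1, 0.9],
}


def most_similar(query_vec):
    """Return the stored text whose embedding is closest to the query."""
    return max(vector_memory, key=lambda text: cosine(query_vec, vector_memory[text]))


# Graph memory: directed edges of the form (subject, relation, object).
edges = [
    ("checkout", "depends_on", "billing"),
    ("billing", "depends_on", "payments_api"),
    ("mobile_app", "depends_on", "checkout"),
]


def affected_by(changed):
    """Return every component that depends, directly or transitively, on `changed`."""
    dependents = {}
    for subject, relation, obj in edges:
        if relation == "depends_on":
            dependents.setdefault(obj, []).append(subject)
    seen, queue = set(), deque([changed])
    while queue:
        node = queue.popleft()
        for dep in dependents.get(node, []):
            if dep not in seen:
                seen.add(dep)
                queue.append(dep)
    return seen


print(most_similar([1.0, 0.0, 0.0]))         # one semantically similar fact
print(sorted(affected_by("payments_api")))   # a chain of related facts
```

The vector lookup returns one isolated fact. The graph traversal follows relationships across several hops, which is the extra work behind the added latency.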

Milla Jovovich Just Built the Most Talked-About AI Memory Tool of 2026. For Free.

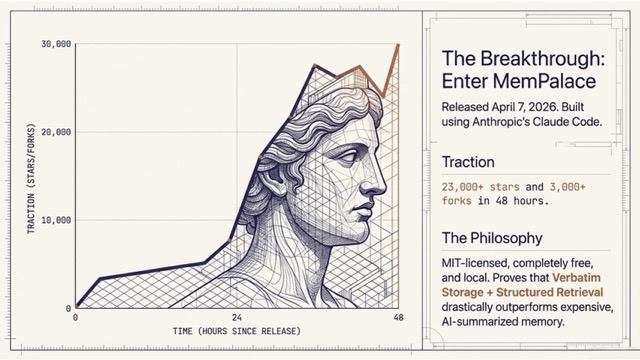

On April 7, 2026, actress Milla Jovovich pushed an open-source AI memory system called MemPalace to GitHub. Within two days, the repository had accumulated over 23,000 stars and nearly 3,000 forks, making it one of the fastest-spreading AI projects of the year.

The full breakdown of MemPalace and its benchmark controversy is on the Mean CEO blog, and it is worth reading before you install anything. Here is the short version.

Jovovich designed the concept and architecture. Developer Ben Sigman, CEO of Bitcoin lending platform Libre Labs, handled the engineering. They built it using Anthropic’s Claude Code. The project is MIT-licensed, free to use, and requires no API key to run.

The Method of Loci Applied to AI

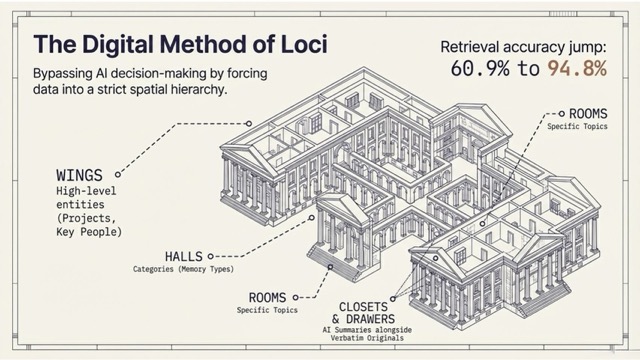

The architecture draws on the ancient Greek “method of loci,” the memory palace technique used by orators to memorize entire speeches by placing ideas in imagined rooms of a building. MemPalace applies the same logic to AI memory: conversations are organized into wings (people or projects), halls (memory types), and rooms (specific topics), with a closet for compressed summaries and drawers for verbatim originals.

The core design decision is deliberate: unlike Mem0 and Zep, which use an AI to decide what is worth remembering, MemPalace stores everything verbatim and uses semantic search to retrieve it later. No AI burns tokens deciding what matters. The structure itself does the organizing.

The result of just adding hierarchical structure over flat vector search: retrieval accuracy jumps from 60.9% to 94.8%, a 34-percentage-point improvement with no change to the underlying model.
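Why would structure alone help retrieval? A minimal sketch, assuming nothing about MemPalace's real code or API: the `Drawer` class, the `search` function, and the word-overlap score (a crude stand-in for semantic search) are all hypothetical. The point is only that scoping a query to a wing and hall shrinks the candidate set before any ranking happens.

```python
from dataclasses import dataclass


@dataclass
class Drawer:
    wing: str  # person or project
    hall: str  # memory type
    room: str  # specific topic
    text: str  # verbatim original, never summarized


def overlap(query: str, text: str) -> int:
    """Count shared lowercase words; a crude stand-in for semantic similarity."""
    return len(set(query.lower().split()) & set(text.lower().split()))


def search(drawers, query, wing=None, hall=None, room=None, top_k=1):
    """Filter by the hierarchy first, then rank what is left."""
    candidates = [d for d in drawers
                  if (wing is None or d.wing == wing)
                  and (hall is None or d.hall == hall)
                  and (room is None or d.room == room)]
    ranked = sorted(candidates, key=lambda d: overlap(query, d.text), reverse=True)
    return ranked[:top_k]


palace = [
    Drawer("project-alpha", "decisions", "pricing",
           "We agreed to launch pricing at 29 euros per month"),
    Drawer("project-beta", "decisions", "pricing",
           "We agreed to keep pricing free during the beta"),
    Drawer("project-alpha", "research", "competitors",
           "Competitor X raised pricing to 49 euros"),
]

query = "what pricing did we agree on"
flat = search(palace, query, top_k=2)                                    # no structure
scoped = search(palace, query, wing="project-alpha", hall="decisions")  # with structure

print([d.wing for d in flat])
print(scoped[0].text)
```

In the flat search, the two pricing decisions from different projects score the same and compete with each other. Once the query is scoped to one wing and hall, only the relevant decision remains.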

The Benchmark Controversy

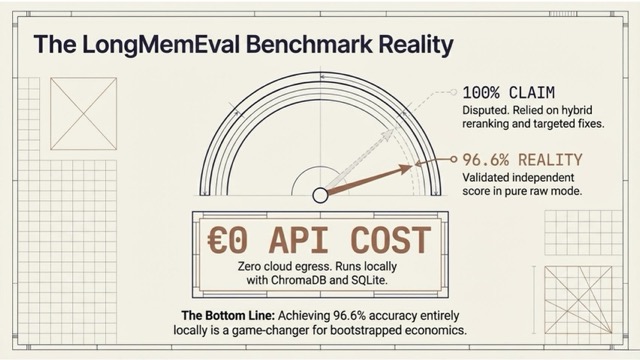

The claimed scores, 100% on LongMemEval and LoCoMo, triggered immediate scrutiny from the developer community. Independent reviewers found the 100% figure was achieved after targeted fixes on specific failing questions, a methodology issue. The revised number is 96.6% in raw mode (zero API cost) and 100% in hybrid mode with optional Haiku reranking.

Sean Ren, a USC professor of computer science and CEO of Sahara AI, reviewed the architecture and described it as a general approach that could scale across AI frameworks, while cautioning that results have not yet been validated outside controlled benchmark tests.

The takeaway for a bootstrapped founder: the benchmark debate is real, but the architecture is sound, the code is open, and the cost is zero. For European startups paying per token, this is worth evaluating on your own workload.

What MemPalace Costs vs. Alternatives

- Mem0: $19 to $249 per month

- Zep: $25 per month and up

- Letta: $20 to $200 per month for agent-managed memory

- MemPalace: free, local, MIT-licensed

Why AI Memory Research Matters Specifically to Bootstrapped European Startups

I am Violetta Bonenkamp, founder of CADChain, a deep-tech IP protection startup for CAD and 3D design files, and Fe/male Switch, an educational startup game for first-time female founders. I have bootstrapped both from the Netherlands and Malta, applied for EU grants, managed teams across time zones, and done all of it while keeping costs tight enough to survive.

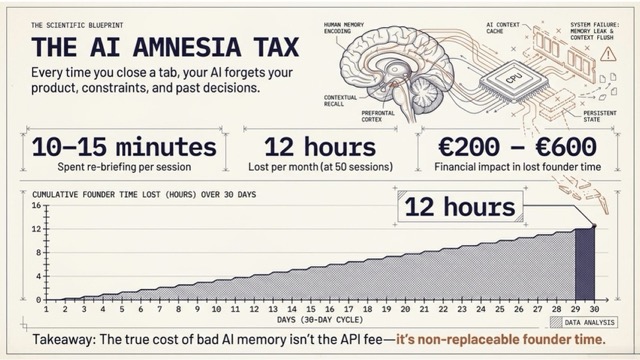

Here is what I know from experience: the real cost of bad AI memory is not the subscription fee you pay. The real cost is the time you spend re-explaining your context.

Every time you open a new session with your AI tool and re-describe your product, your users, your constraints, and your decisions from last week, you are paying with your most scarce resource: time. And in a bootstrapped startup, the founder’s time is the only thing that is genuinely non-replaceable.

The Case Study of Learn Dutch with AI

Learn Dutch with AI is a project built on AI-generated content and conversational language learning. When we analyzed how much time was lost re-establishing context across content creation sessions, the number was startling. Each new content session required roughly 10 to 15 minutes of re-briefing the AI on the pedagogical approach, the target learner profile, and the specific grammar patterns covered so far.

With persistent memory or a structured local memory system like MemPalace, that re-briefing collapses to seconds. Across 50 content sessions per month, at 10 to 15 minutes each, that is roughly 8 to 12.5 hours of recovered founder time. For a solo operator, that is more than a full working day.

The Healthy Restaurants in Malta Case

Healthy Restaurants in Malta is another project where AI plays a central role in content production and SEO. The editorial voice, the specific language around Mediterranean diet principles, the recurring restaurant profiles, all of these require consistent context across sessions. Without memory persistence, every new content session risks producing material that conflicts with what was published three weeks ago. With a memory layer, the AI effectively operates with institutional knowledge of the project.

This is not a minor efficiency gain. For a lean content operation, consistency is product quality.

Fe/male Switch and the Neuroscience Connection

At Fe/male Switch, we built our “gamepreneurship” methodology on research from developmental psychology, neuroscience, and cognitive linguistics. The same neuroscience principles that govern human memory consolidation, encoding through emotional salience, reinforcement through spaced repetition, transfer through varied context, are the principles that make the game’s learning mechanics work.

When I look at the current AI memory research, what strikes me is how closely the best architectures mirror what we already knew about human learning. HippoRAG mirrors the hippocampal index. MemPalace mirrors the method of loci. The convergence is not accidental. The brains that built AI were shaped by the same evolutionary pressures that shaped the memory systems they are now trying to replicate.

The Practical SOP: How to Implement AI Memory in a Bootstrapped Startup Today

Here is a step-by-step process you can follow without a large engineering team and without a large budget.

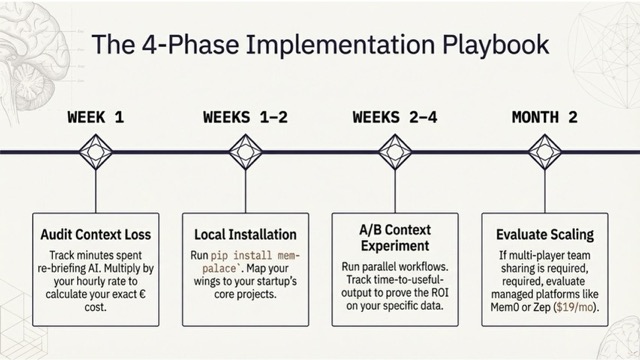

Phase 1: Audit Your Current Context Loss (Week 1)

Track every AI session this week. Note how many minutes you spend re-establishing context at the start of each session. Add up the minutes, multiply by your hourly rate, and scale the week up to a month. That number is your monthly cost of AI amnesia.

For most solo founders using AI tools daily, this number falls between 200 and 600 euros per month in lost time equivalent.
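The audit arithmetic fits in a few lines. This is a minimal sketch: the session log and the 40 euro hourly rate are hypothetical, and one week of tracking is scaled to a month using 52 weeks divided by 12 months.

```python
WEEKS_PER_MONTH = 52 / 12  # average number of weeks in a month


def monthly_amnesia_cost(rebrief_minutes_this_week, hourly_rate_eur):
    """Scale one week of logged re-briefing minutes to a monthly cost in euros."""
    weekly_hours = sum(rebrief_minutes_this_week) / 60
    return weekly_hours * WEEKS_PER_MONTH * hourly_rate_eur


# Hypothetical log: minutes spent re-briefing at the start of each session this week.
session_log = [12, 10, 15, 8, 10, 14, 11, 10, 12, 13]

cost = monthly_amnesia_cost(session_log, hourly_rate_eur=40.0)
print(f"Monthly cost of AI amnesia: {cost:.2f} EUR")
```

Replace the log with your own tracked minutes and the rate with what an hour of your time is worth to the business.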

Phase 2: Install MemPalace Locally (Week 1-2)

Install via pip: pip install mem-palace. The system runs entirely on your local machine using ChromaDB and SQLite. Two dependencies, no API key, no ongoing cost.

Set up your initial wing structure to match your startup’s architecture: one wing per project, one wing per key team member or client, one wing for competitive intelligence, one wing for your own decisions and reasoning.

Phase 3: Run a Context Experiment (Weeks 2-4)

Run two parallel workflows: one with MemPalace, one without. Track the time-to-useful-output for each session. If the structured memory approach saves you three or more minutes per session on average, the architecture is working for your use case.
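The comparison itself needs nothing more than two lists of timings. A minimal sketch, with made-up numbers, that applies the three-minute threshold from the paragraph above:

```python
from statistics import mean


def minutes_saved_per_session(without_memory, with_memory):
    """Average time-to-useful-output saved per session, in minutes."""
    return mean(without_memory) - mean(with_memory)


# Hypothetical timings in minutes, one entry per session.
baseline = [14, 12, 16, 11, 13]  # workflow without a memory layer
with_mem = [9, 8, 11, 7, 9]      # workflow with a memory layer

saved = minutes_saved_per_session(baseline, with_mem)
worth_keeping = saved >= 3       # threshold taken from the text above

print(f"Saved {saved:.1f} minutes per session; keep it: {worth_keeping}")
```

With only a handful of sessions the average is noisy, so collect timings across the full two to four weeks before deciding.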

Watch for the benchmark caveat noted by USC’s Sean Ren: controlled benchmark performance does not always translate to real-world improvement on non-standard queries. Test on your actual work, not on synthetic tasks.

Phase 4: Evaluate Managed Memory for Production (Month 2)

If you need memory across team members, not just on a single machine, evaluate Mem0’s managed platform or Zep. The pricing scales from $19 per month. For a three-person team sharing AI context on a shared product, the cost per person is lower than a single specialty coffee per day.

For privacy-sensitive projects, specifically anything involving client data, IP, or confidential business logic, the local-first architecture of MemPalace has a structural advantage. Your data never leaves your machine.

Mistakes to Avoid

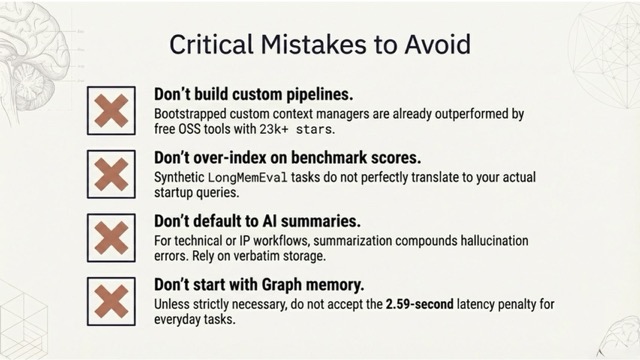

Do not start with graph memory. Unless your use case genuinely involves complex entity relationships, such as a multi-client CRM or a technical system with many interdependent components, the additional latency of graph memory (2.59 seconds per query) is not worth the modest accuracy gain for everyday tasks.

Do not build a custom memory system from scratch. Several founders I know through Fe/male Switch have spent weeks building bespoke context management pipelines that are now outperformed by a free, open-source tool with 23,000 GitHub stars. The OSS ecosystem is ahead of most custom solutions.

Do not over-index on benchmark scores. The MemPalace benchmark controversy illustrates a wider problem in AI tooling: reported scores often rely on controlled conditions that differ from your actual queries. Always test on your own data before committing.

Do not forget to audit quality, not just speed. Memory systems that summarize rather than store verbatim can introduce errors that accumulate over time. For any use case where precision matters (legal, technical, financial), verbatim storage is safer than AI-generated summaries.

Key Questions About AI Memory for Startups: What Founders Ask Most

What is AI memory and why does it matter for startups?

AI memory refers to a system’s ability to retain and retrieve information from past interactions. For startups, this matters because every AI tool session that starts without context forces the founder to re-establish background information manually. The time cost of this re-briefing, multiplied across dozens of weekly sessions, often exceeds the cost of the AI subscription itself. Memory-optimized workflows have been shown to reduce API costs by 30 to 60 percent while improving output consistency and quality.

How does human memory work, according to neuroscience?

Human memory operates through a two-stage system. The hippocampus rapidly encodes new experiences, including episodic events, emotional salience, and surprising details. During sleep, especially slow-wave sleep, the hippocampus replays these compressed representations and gradually transfers them to the neocortex for long-term storage. Retrieval is not a playback: it is a reconstruction using hippocampal cues and neocortical world knowledge. This means human memory is inherently associative, context-sensitive, and subject to distortion by current knowledge.

What is the difference between vector memory and graph memory in AI?

Vector memory stores text as numerical embeddings in a vector database and retrieves the most semantically similar entries when queried. It works well for personalization and preference recall. Graph memory stores facts as nodes connected by named relationships and enables multi-hop reasoning, such as “who worked on the project that depends on the system that was changed last Tuesday.” Graph memory scores better on complex relational queries but adds latency (around 2.59 seconds per query in Mem0g vs. Mem0). For most bootstrapped startups, vector memory is sufficient and lower cost.

What is MemPalace and is it really free?

MemPalace is an open-source AI memory system created by actress Milla Jovovich and developer Ben Sigman, released in April 2026. It is MIT-licensed, runs entirely locally using ChromaDB and SQLite, requires no API key, and costs nothing. It stores conversation data verbatim, organizes it into a hierarchical palace structure inspired by the ancient method of loci, and retrieves relevant context via semantic search. The claimed benchmark score of 100% on LongMemEval is disputed (the validated figure is 96.6% in raw mode), but the code is functional and independently reviewed. The full analysis is on the Mean CEO blog breakdown of MemPalace.

What is catastrophic forgetting in AI and how does it affect bootstrapped startups?

Catastrophic forgetting is the tendency of a neural network to overwrite previously learned patterns when it learns something new. Unlike human memory, which uses the hippocampal-neocortical architecture to separate fast new learning from slow stable storage, most standard LLMs store knowledge in a single set of weights. This means that fine-tuning a model on your company’s data risks degrading its general capabilities. For bootstrapped startups, this is relevant when evaluating whether to fine-tune vs. use retrieval-augmented generation (RAG) for domain-specific knowledge. RAG is generally safer and cheaper for small teams.
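A deliberately minimal toy shows the mechanism. The model below has a single shared weight and is trained with plain gradient descent, first on task A and then only on task B. Real networks have billions of weights and the effect is less absolute, but the cause is the same: both tasks write to the same parameters. The tasks and hyperparameters are invented for illustration.

```python
def train(w, data, lr=0.1, epochs=200):
    """Fit y = w * x by gradient descent on mean squared error."""
    for _ in range(epochs):
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


def loss(w, data):
    """Mean squared error of y = w * x on the given data."""
    return sum((w * x - y) ** 2 for x, y in data) / len(data)


inputs = (1.0, 2.0, 3.0)
task_a = [(x, 2 * x) for x in inputs]   # task A wants w = 2
task_b = [(x, -1 * x) for x in inputs]  # task B wants w = -1

w = train(0.0, task_a)
loss_a_before = loss(w, task_a)  # task A is learned

w = train(w, task_b)             # continue training on task B only
loss_a_after = loss(w, task_a)   # task A has been overwritten

print(f"loss on A after learning A: {loss_a_before:.6f}")
print(f"loss on A after learning B: {loss_a_after:.2f}")
```

Retrieval-based memory sidesteps this because new knowledge is added to a store next to the model rather than written into its weights.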

What is the method of loci and how does MemPalace use it?

The method of loci, also called the memory palace, is a mnemonic technique used by ancient Greek orators to memorize long speeches. It involves imagining a familiar physical space and placing vivid mental images at specific locations along a route. To recall the information, the speaker mentally walks the route and retrieves each image. Neuroscientists have confirmed that this technique dramatically improves recall by engaging the brain’s spatial memory pathways, specifically the hippocampal place cell system. MemPalace applies this logic to AI memory by organizing conversation data into wings, halls, rooms, closets, and drawers, a navigable hierarchy rather than a flat search index. The structural organization alone improves retrieval accuracy by 34 percentage points over flat vector search.

How much does AI memory cost for a bootstrapped startup in Europe?

The options range from free to several hundred euros per month depending on scale. MemPalace: free, local, MIT-licensed. Mem0: $19 to $249 per month depending on the tier. Zep: $25 per month and up. Letta: $20 to $200 per month for agent-managed memory. For a solo founder or two-person team, the managed Mem0 plan at $19 per month delivers persistent cross-device memory, personalization, and graph capabilities that cover most startup use cases. For privacy-sensitive work with client data, MemPalace’s local-first architecture is zero cost and zero data egress.

Why do AI systems forget between sessions, and can this be fixed without expensive infrastructure?

The core issue is that LLMs do not have persistent storage by design. Each session loads the model weights (the baked-in knowledge) and a context window (the current conversation). When the session ends, the context is discarded. There is no mechanism to automatically carry episodic information forward. This can be addressed without expensive infrastructure by using a local vector database like ChromaDB to store conversation summaries or verbatim exchanges, then injecting relevant retrieved context at the start of each new session. This is exactly what MemPalace automates. The entire memory infrastructure runs on-device with no API calls, no cloud dependency, and no recurring cost.
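The store-then-inject loop described above can be sketched with the Python standard library alone. This is an illustration of the pattern, not MemPalace's code: SQLite holds the verbatim exchanges, and a simple word-overlap score stands in for the embedding search a vector database such as ChromaDB would provide. All function names are hypothetical.

```python
import sqlite3


def open_store(path=":memory:"):
    """Open the memory store; pass a file path instead to persist across sessions."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS exchanges (id INTEGER PRIMARY KEY, text TEXT NOT NULL)"
    )
    return conn


def remember(conn, text):
    """Store one exchange verbatim."""
    conn.execute("INSERT INTO exchanges (text) VALUES (?)", (text,))
    conn.commit()


def recall(conn, query, top_k=2):
    """Return the stored exchanges sharing the most words with the query."""
    query_words = set(query.lower().split())
    rows = [row[0] for row in conn.execute("SELECT text FROM exchanges ORDER BY id")]
    scored = [(len(query_words & set(text.lower().split())), text) for text in rows]
    scored = [pair for pair in scored if pair[0] > 0]  # drop unrelated entries
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [text for _, text in scored[:top_k]]


def build_prompt(conn, user_message):
    """Prepend recalled context to the first message of a new session."""
    notes = "\n".join(f"- {line}" for line in recall(conn, user_message))
    return f"Relevant notes from earlier sessions:\n{notes}\n\nUser: {user_message}"


store = open_store()
remember(store, "Target learner profile is A2 level adults relocating to the Netherlands")
remember(store, "Invoice template uses a Maltese VAT number")
remember(store, "Grammar covered so far: present tense and word order")

prompt = build_prompt(store, "Draft the next grammar lesson for our learner profile")
print(prompt)
```

The in-memory database keeps the example self-contained. With a file path, the same three functions carry context from one session to the next without any API call.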

What can startup founders learn from neuroscience research about using AI tools more effectively?

Several principles from human memory research apply directly. Emotional salience improves encoding: AI outputs you find surprising, counterintuitive, or directly useful will be better remembered and acted on than outputs you merely scan. Spaced repetition improves retention: reviewing key AI-generated insights a day later and a week later consolidates them better than reading them once. Sleep consolidation is real: decisions made under sleep deprivation rely on less consolidated memory, which means the judgement quality is lower even if the information is technically available. And reconstruction bias matters: when you ask an AI to recall something from a past session, it will reconstruct based on statistical probability, not perfect retrieval. Verbatim storage, as in MemPalace, avoids this reconstruction error because the original text is returned unchanged.

What are the most promising directions in AI memory research for 2026 and beyond?

The most active areas are: neurobiologically inspired architectures such as HippoRAG that mirror hippocampal-neocortical dynamics; KV cache compression techniques like Dynamic Memory Sparsification that reduce memory requirements while improving reasoning quality; multi-agent collaborative memory that allows different AI specialists to maintain separate memory diaries and share selectively; and self-evolving memory systems that identify their own performance gaps and adapt without human-defined rules. For bootstrapped startups, the practical frontier is the combination of local-first verbatim storage with structured hierarchical retrieval, which MemPalace demonstrates can be built with minimal dependencies and zero ongoing cost. The research trajectory suggests that within 18 months, persistent cross-session memory will be a standard feature of major AI platforms, making current manual memory management workflows a temporary necessity.

What to Do Next

The gap between human memory capability and AI memory capability is closing, but the closing is not automatic. Someone has to build the memory layer, and right now, that someone is you.

Start small: audit one week of AI sessions, calculate your context re-briefing cost, and install MemPalace on your machine. Run the experiment in parallel with your normal workflow for two weeks. If it saves you meaningful time, it becomes infrastructure. If it does not, you spent nothing.