I am going to say something that most AI commentators are too afraid to say: the biggest threat from AI to your business is you not understanding what AI actually is.

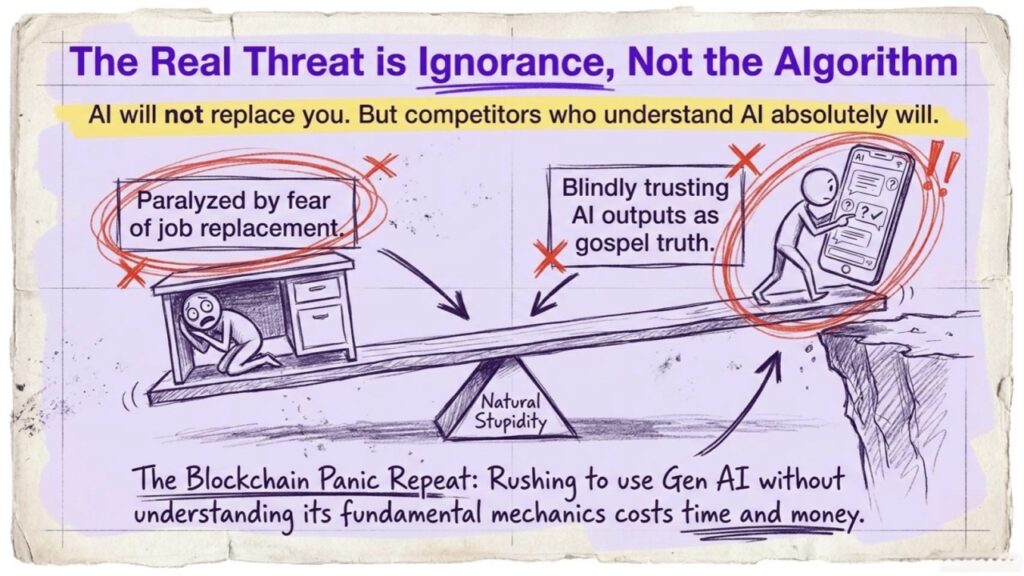

Every week, I talk to bootstrapping founders across Europe who are either paralyzed by fear of AI taking their jobs, or blindly trusting AI outputs as gospel truth. Both groups are making a catastrophic mistake. Both are letting “natural stupidity” beat them before the algorithm even gets a chance.

I built CADChain, a deep tech startup that uses blockchain to protect CAD intellectual property. I also bootstrapped Fe/male Switch, a startup game that trains women founders from zero to first customer, using AI characters like our AI entrepreneur Elona Musk and AI co-founders called PlayPals. And I run Learn Dutch with AI, which proves that a narrow, well-designed AI application can outperform expensive human tutoring at a fraction of the cost. So when I talk about AI, I am talking from real product decisions, real budgets, and real users, not from a conference stage with a slide deck.

Here is what I have learned: most of what people believe about Gen AI and LLMs is wrong. And that wrongness is costing European bootstrappers money every single day.

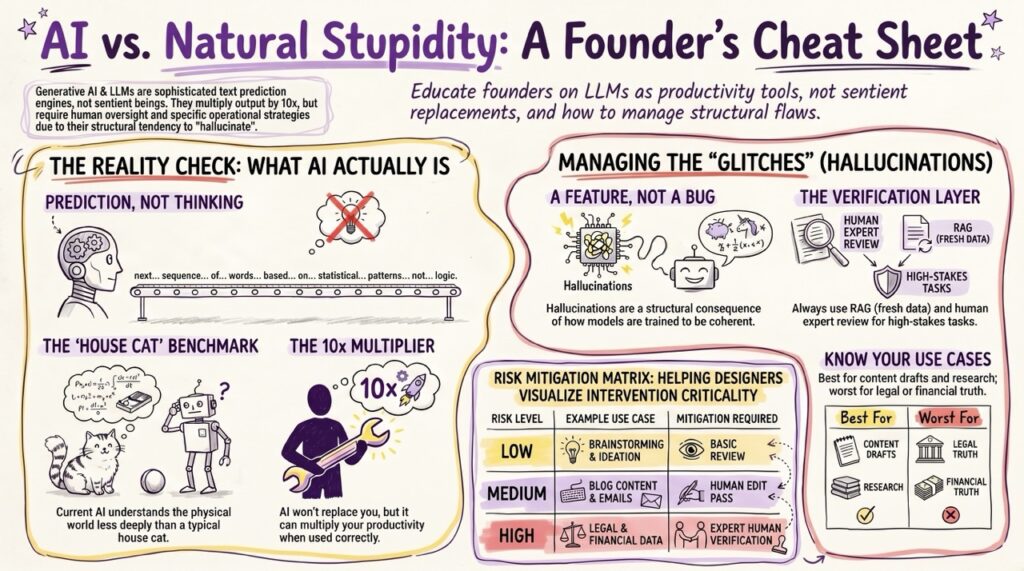

TL;DR: Generative AI and Large Language Models are sophisticated text prediction engines, not sentient beings. They cannot replace human judgment, but they can multiply your output by 10x when used correctly. Hallucinations are a structural feature of how LLMs are trained, not a bug to be patched, and they can be managed with the right workflows. The real risk for European founders is not that AI gets too smart. It is that your competitors from the USA and China learn to use AI well before you do. Keep reading for the exact playbook.

The AI Panic Is the New Blockchain Panic (And Just as Misplaced)

Remember 2017? Everyone was screaming about blockchain changing everything. Supply chains, voting systems, healthcare records, the entire financial system, all of it was going to be “put on the blockchain.” Founders rushed to add “blockchain-enabled” to their pitch decks. Investors threw money at anything with a distributed ledger. And then… most of it collapsed into nothing.

Why? Because almost nobody understood what blockchain actually does.

Blockchain does one specific thing well: it preserves a piece of data and lets you prove, cryptographically, that this data has not been changed since it was recorded. That is it. Blockchain does not verify that the underlying data is true. It verifies that the data was not tampered with. If you put a lie on a blockchain, you get a tamper-proof lie. This fundamental confusion cost the industry billions.

At CADChain, we built on exactly this principle: protect the integrity of CAD design files so engineers can prove their intellectual property was theirs at a specific point in time. Narrow application, real problem. Not “blockchain for everything.”

Gen AI is repeating this exact cycle. The technology is real and useful for specific things. The hysteria around it, on both ends, from “AI will replace all humans” to “AI is just a toy,” is as misplaced as the blockchain mania was.

Let’s break it down.

What Gen AI and LLMs Actually Are (Not What The Media Tells You)

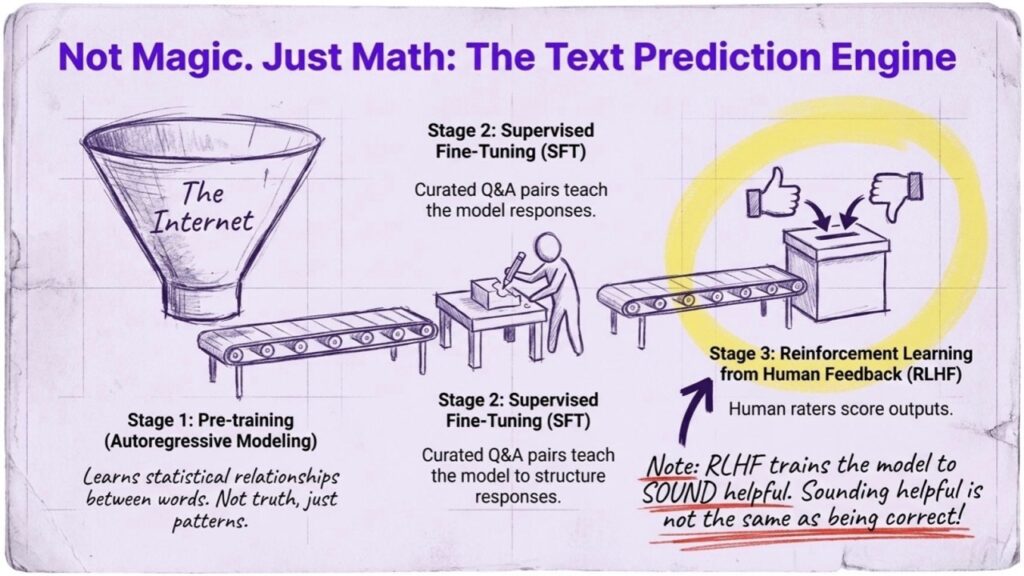

A Large Language Model (LLM) is, at its core, a very large statistical pattern-matching system trained on text. When you type a prompt into ChatGPT or Claude, the model does not “think” in any meaningful sense. It predicts what sequence of tokens (word-parts) is most likely to follow your input, based on patterns it learned from processing hundreds of billions of words.

The technical process looks like this:

Stage 1: Pre-training. The model reads an enormous corpus of text: a large portion of the public internet, books, code repositories, and scientific papers. It learns to predict the next word (more precisely, the next token) in a sequence. This is called autoregressive language modeling. The model is not told what is true or false. It learns statistical relationships between words and concepts.

Stage 2: Supervised Fine-Tuning (SFT). The raw pre-trained model is tuned on curated datasets of high-quality question-answer pairs, written by humans. This teaches the model to respond in a helpful, structured way rather than simply continuing whatever text it was given.

Stage 3: Reinforcement Learning from Human Feedback (RLHF). This is the key stage that separates modern chat models from raw language models. Human raters score the model’s outputs on criteria like helpfulness, accuracy, and harmlessness. A separate “reward model” is trained on these scores. The LLM is then updated, using reinforcement learning, to generate outputs that would score highly with that reward model.

RLHF is why ChatGPT sounds confident and helpful even when it is wrong. The system was rewarded for sounding helpful. Sounding helpful is not the same as being correct.
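Here is a toy illustration of that gap. It is not an implementation of RLHF. The `toy_reward` function is a hypothetical stand-in for a reward model that has only learned to like a confident tone, and the candidate answers are invented. The sketch simply picks the highest-scoring candidate, which is enough to show how optimizing a proxy for "sounds helpful" can favor a wrong answer.

```python
# Hypothetical word lists; a real reward model is a trained neural network.
CONFIDENT_WORDS = {"definitely", "certainly", "clearly"}
HEDGING_WORDS = {"maybe", "unsure", "possibly", "uncertain"}


def toy_reward(answer: str) -> int:
    """Score tone only: +1 per confident word, -1 per hedging word."""
    words = answer.lower().replace(",", " ").replace(".", " ").split()
    score = sum(1 for word in words if word in CONFIDENT_WORDS)
    score -= sum(1 for word in words if word in HEDGING_WORDS)
    return score


def pick_best(candidates: list[str]) -> str:
    """Select the candidate the toy reward likes most."""
    return max(candidates, key=toy_reward)


CANDIDATES = [
    # Confident and wrong.
    "The capital of Australia is definitely Sydney.",
    # Hedged and right.
    "I am unsure, but the capital of Australia is possibly Canberra.",
]

print(pick_best(CANDIDATES))
```

Real reward models are far better than this, and raters do score accuracy. The point of the sketch is only that a reward is a proxy, and a proxy can be satisfied without being correct.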

Now here is what an LLM is NOT:

- It does not “know” things the way you know things. It has statistical associations.

- It has no continuous memory between sessions unless given tools for that.

- It cannot reliably reason about novel physical situations.

- It does not have goals, desires, or the capacity to plan against you.

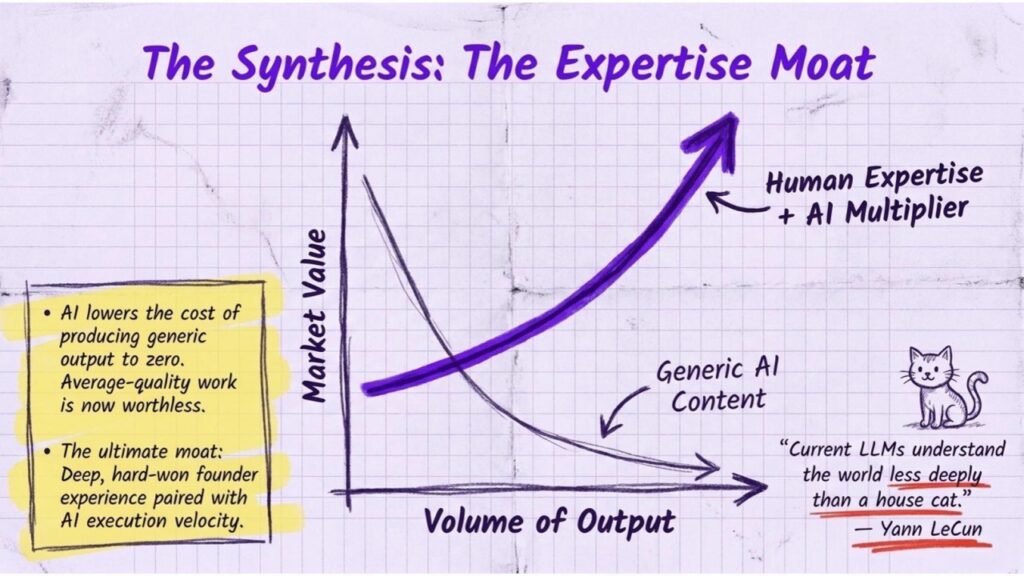

Yann LeCun, Meta’s Chief AI Scientist and one of the three “godfathers” of modern AI (he shared the Turing Award for pioneering deep learning), has been extraordinarily clear about this. He argues that current LLMs understand the world less deeply than a house cat. A cat can navigate a room, predict where a ball will roll, learn from a single bad experience. An LLM trained on 100,000 years’ worth of reading cannot. It has mastered the surface of language without grasping the physical reality underneath it.

LeCun’s framing: on the highway toward human-level AI, LLMs are “an off-ramp.” Useful for specific tasks, a dead end if you are hoping for artificial general intelligence (AGI).

This is not pessimism. This is a precise technical argument from someone who helped build the foundations of the entire field.

Hallucinations Are Not a Bug. They Are the Architecture.

This is the part that most AI explainers get wrong, and getting it wrong is expensive for founders.

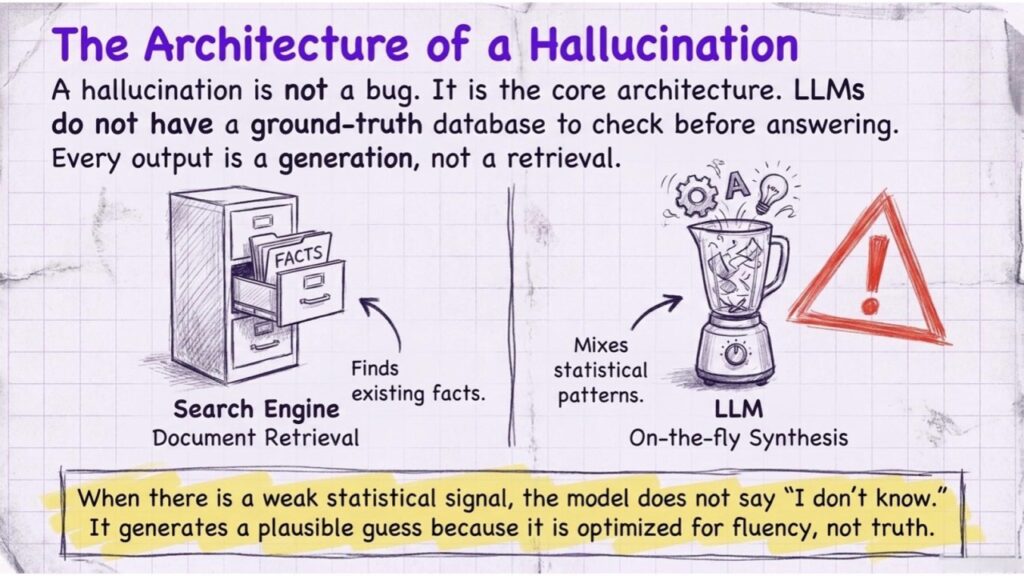

A hallucination in an LLM is when the model generates text that sounds confident and coherent but is factually incorrect. It might cite a paper that does not exist, invent a court case, or describe a product feature that was never built.

Most people call this a “bug” and assume it will be “fixed.” This framing is incorrect, and here is why.

Hallucinations are a direct consequence of how LLMs are trained.

Remember: the model’s job is to predict the most statistically plausible next token. It is not optimizing for truth. It is optimizing for coherence and fluency. When asked a question for which it has no reliable training signal, it does not say “I don’t know” by default. It generates what looks like a plausible answer, because that is exactly what it was trained to do.

RLHF helps, but only partially. Human raters can train the model to express uncertainty more often, and to refuse certain categories of questions. But the underlying architecture, predicting the next token based on statistical patterns, never changes. The model does not have access to a ground-truth database it checks before answering. Every output is a generation, not a retrieval.

Think of it this way: an LLM is not a search engine. A search engine looks up stored documents. An LLM synthesizes text on the fly. The synthesis can be wrong.

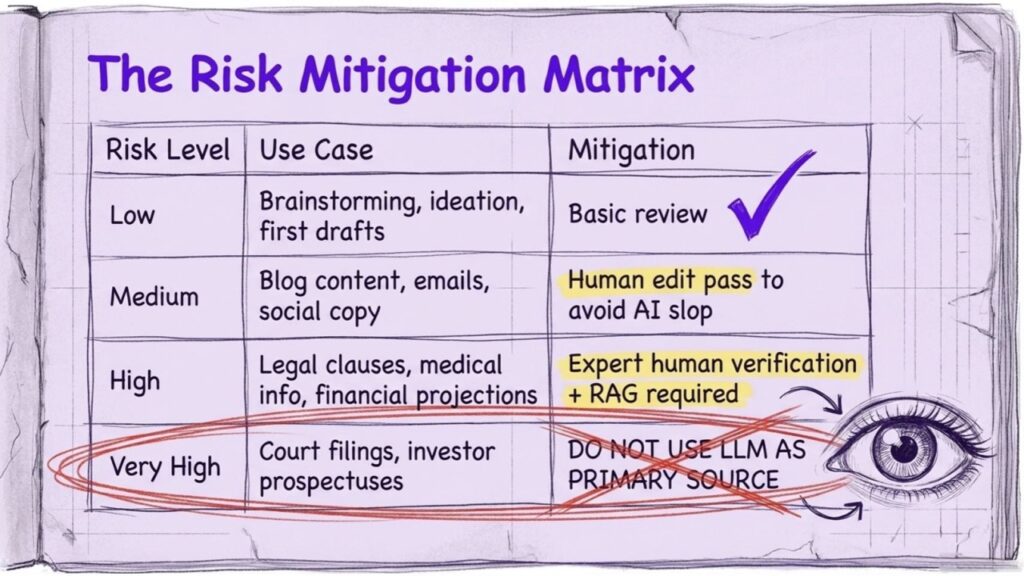

The practical takeaway for startup founders: never use an LLM as a source of truth for facts, citations, legal information, medical information, or financial data without verification.

Use it as a generator. Verify outputs with primary sources.

How to Actually Mitigate Hallucinations (A Practical Startup Playbook)

Since you cannot eliminate hallucinations architecturally (at least not with current LLMs), you manage them operationally. Here is how.

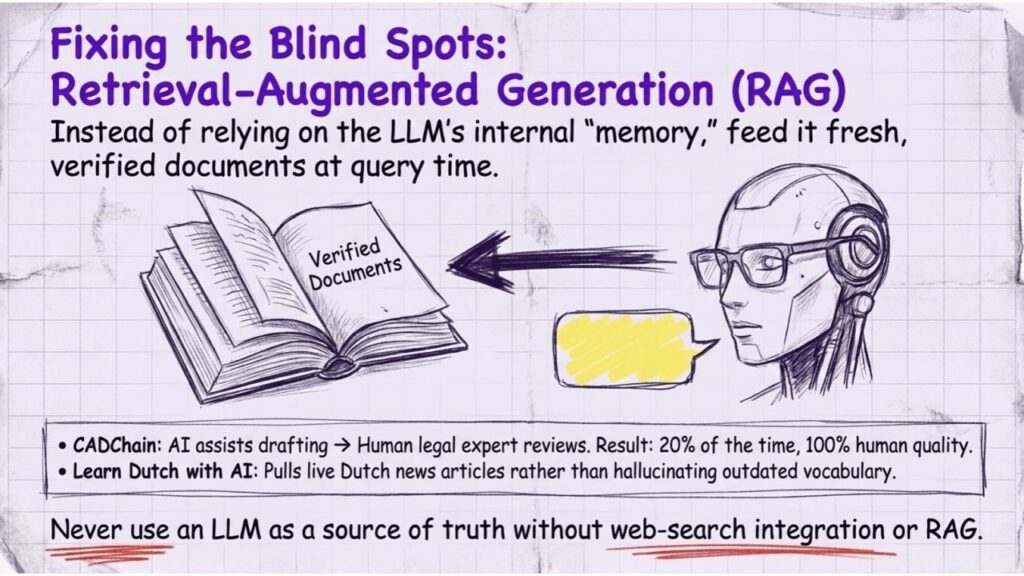

1. Retrieval-Augmented Generation (RAG)

Instead of relying on the LLM’s internal “memory,” you feed it fresh, verified documents at query time. The model then generates answers grounded in those documents rather than in its training data alone. This can substantially reduce the hallucination rate for factual queries, although the model can still misread or misquote the documents you give it. Several LLM platforms and open-source frameworks offer built-in support for this.

2. Web Search Integration

Modern LLM products, including ChatGPT with browsing, Claude with web search, and Perplexity, can pull live information from the web before generating a response. For anything time-sensitive or factual, use these modes. This is how our Learn Dutch with AI project keeps language content current. The AI tutors can pull real Dutch news articles as conversation practice material rather than hallucinating outdated phrases.

3. Structured Prompts with Constraints

Prompt engineering is real. A vague prompt produces a vague, often hallucination-prone answer. A structured prompt with explicit constraints produces more reliable output. Compare:

- Bad: “Tell me about GDPR compliance for startups.”

- Better: “List the 5 most important GDPR obligations for a SaaS startup with fewer than 250 employees operating in the EU. If you are uncertain about any item, say so explicitly. Do not cite specific cases unless you are confident they exist.”

4. Human Expert Review (Non-Negotiable for High-Stakes Outputs)

AI output for legal documents, medical content, investor materials, or anything with real liability must be reviewed by a qualified human. This is not optional. At CADChain, we combine AI-assisted document drafting with review by our legal expert Dirk-Jan. The AI drafts faster. The human catches errors. Together they produce output in 20% of the time it would take a human alone, at the same quality the human would reach alone.

5. Temperature Settings and Explicit Uncertainty

If you are using the API directly, lower temperature settings (closer to 0) make outputs more deterministic and less likely to wander into confabulation, although a low temperature will not correct a fact the model has wrong. Also prompt the model to treat “I don’t know” or “I’m not certain” as explicit options. Models trained with RLHF can do this, but they need to be given permission.
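For the curious, here is roughly what the temperature setting does under the hood. This is a minimal sketch of temperature-scaled softmax over made-up scores for three candidate tokens. The scores are assumptions for illustration, and this sketch requires a positive temperature (how a given API treats a temperature of exactly 0 is provider-specific).

```python
import math


def softmax_with_temperature(logits: list[float], temperature: float) -> list[float]:
    """Turn raw token scores into probabilities, sharpened or flattened by temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive in this sketch")
    scaled = [score / temperature for score in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(score - peak) for score in scaled]
    total = sum(exps)
    return [value / total for value in exps]


# Made-up scores for three candidate next tokens.
LOGITS = [2.0, 1.0, 0.5]

low = softmax_with_temperature(LOGITS, 0.2)   # probability concentrates on the top token
high = softmax_with_temperature(LOGITS, 1.5)  # probability spreads across alternatives

print([round(p, 3) for p in low])
print([round(p, 3) for p in high])
```

At low temperature the top-scoring token takes almost all of the probability, so the model repeats its single best guess. At high temperature the less likely tokens get a real chance of being sampled, which is good for brainstorming and bad for facts.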

| Hallucination Risk Level | Use Case Examples | Mitigation Required |
|---|---|---|
| Low | Brainstorming, ideation, first drafts | Basic review |
| Medium | Blog content, emails, social copy | Human edit pass |
| High | Legal clauses, medical info, financial projections | Expert human verification + RAG |
| Very High | Court filings, clinical decisions, investor prospectuses | Do not use LLM as primary source |

The Best Use Cases for AI in a Bootstrapped European Startup

Here is where AI genuinely saves you money and time. This is the list I actually use.

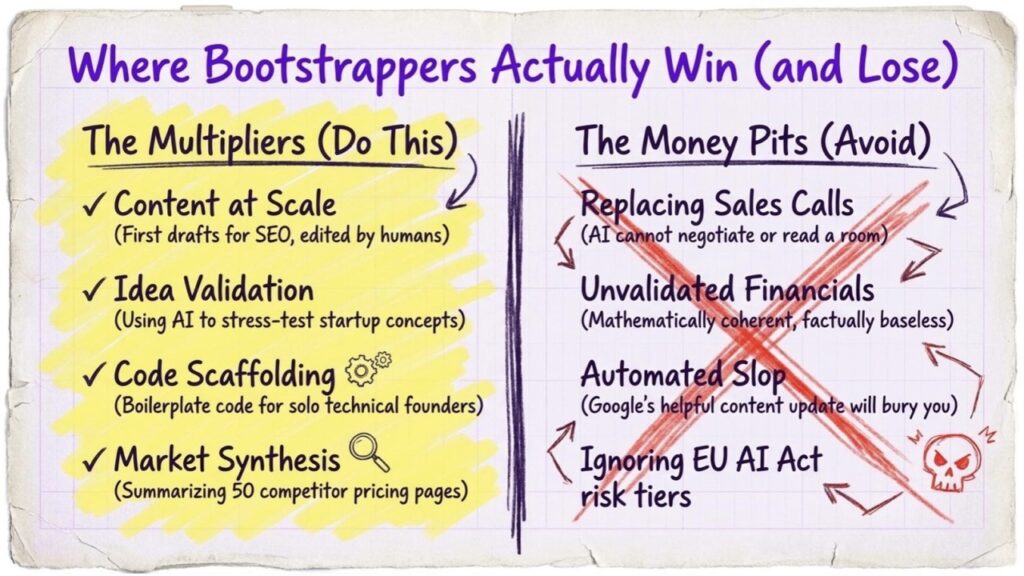

Content at scale. Writing blog posts, social media copy, email sequences, product descriptions. At Fe/male Switch, our AI character Elona Musk runs our SEO content strategy. We use LLMs to produce first drafts, which humans then edit for accuracy, voice, and factual correctness. Our publishing velocity is 10x what it would be with a human-only team on our budget.

Market research synthesis. Paste 10 competitor pricing pages into an LLM and ask it to identify patterns. Ask it to summarize 50 customer reviews. Ask it to list the most common objections in a specific market. The synthesis is fast, often useful, and cheap.

Code scaffolding and debugging. GitHub Copilot, Cursor, and similar tools are saving solo technical founders enormous amounts of time on boilerplate code, documentation, and bug identification. This is one of LeCun’s acknowledged strong suits for LLMs.

Idea validation. Our Fe/male Switch SANDBOX uses PlayPal, an AI co-founder, to help early-stage founders stress-test their startup ideas before spending money. PlayPal asks hard questions, runs through common failure scenarios, and generates market research starting points. It does not replace talking to real customers, but it radically improves the quality of the questions you go into those conversations with.

Language learning at scale. Learn Dutch with AI proves this case. Language practice with an AI tutor costs a fraction of a human tutor and is available 24/7. The AI can adapt to your level, correct your grammar in real time, and generate unlimited practice sentences on topics you actually care about. For expat founders in the Netherlands, this is a genuinely useful product.

Customer support drafts. Using LLMs to draft first-response templates for support tickets, which human agents then personalize and send, reduces response time and support staff workload without removing the human from the loop.

The Worst Use Cases for AI as a Bootstrapped Startup

And here is where founders waste money and embarrass themselves.

Replacing your sales process entirely. AI cannot build a relationship. It cannot read the room on a sales call. It cannot negotiate with nuance. Use AI to research the prospect, prepare your talking points, and draft follow-up emails. Show up yourself.

Generating financial projections without validation. LLMs will generate beautiful-looking financial models that are mathematically coherent and factually baseless. Numbers from an LLM need to be built on real data you input, not generated from thin air.

Legal advice. An LLM will confidently explain the law to you. It may be describing a law from a different jurisdiction, or a law that was amended, or a case that does not exist. Use AI to understand concepts and generate questions. Pay a lawyer for actual legal decisions.

Replacing expert technical judgment. If you are building something where being wrong costs lives or significant money, you need domain experts. AI is a research and drafting tool in these contexts, not an authority.

Fully automated content with no human review. Google’s Helpful Content systems are now specifically tuned to detect low-quality AI-generated content. Flooding markets with AI slop is a strategy that works for approximately six months before it collapses. Build for quality and longevity, especially if you are bootstrapping and cannot afford to rebuild your SEO from scratch.

Why You Should Not Fear AI (And What to Do Instead)

Let me be direct. The “AI will take all jobs” narrative is, at this moment in time, not supported by the technical reality of what LLMs can do.

LeCun’s argument is instructive here: current AI systems, including the most advanced LLMs available, cannot match the physical understanding of a cat. They cannot learn to drive a car with 20 hours of practice the way a 17-year-old can. They fail at tasks requiring genuine reasoning about novel situations.

What they can do is execute well-defined language tasks at enormous speed and low cost.

The humans most at risk from AI are not experts. They are people doing repetitive, well-defined language tasks with no particular expertise layered on top. The people least at risk are people with deep domain knowledge who learn to use AI as a production multiplier.

Here is the counterintuitive truth: AI raises the value of genuine expertise because it lowers the cost of producing generic output. When anyone can produce an average-quality blog post in 30 seconds, average-quality blog posts are worth nothing. But when you can produce genuinely expert content, backed by real experience, faster than anyone else, you have a substantial competitive edge.

That is exactly the philosophy behind everything we build at Fe/male Switch. Our content comes from real founders with real experience. The AI helps us produce it faster. The expertise is what makes it worth reading.

For European bootstrappers specifically: your runway is limited. Every hour you spend on tasks an AI can do adequately is an hour you are not spending on things only you can do. Revenue generation. Relationship building. Product decisions. Use AI to buy back your time for the things that actually matter.

A Founder’s SOP: How to Integrate AI Into Your Bootstrapped Startup Today

This is the exact workflow I recommend to founders in the Fe/male Switch game and to anyone who asks me directly.

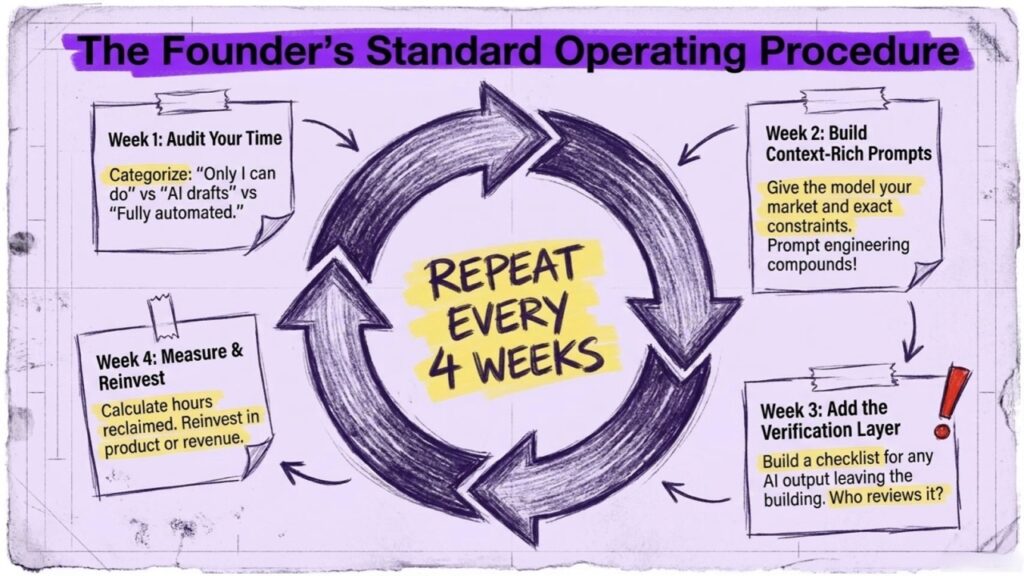

Week 1: Audit your time. List every task you do in a week. Mark each one: “Only I can do this” / “AI can draft, I refine” / “AI can do this fully with my oversight.” Most founders discover that 30-40% of their time falls in the second or third category.

Week 2: Pick one task category and build a prompt library. Start with content or customer communications. Write 5-10 standard prompts for recurring tasks. Test them. Refine them. A well-engineered prompt for your specific context is worth more than any AI tool subscription.

Week 3: Add a verification layer. For any AI output that goes to a customer, investor, or the public: build a checklist. What facts need to be verified? What tone does it need to hit? Who reviews it before it leaves the building?

Week 4: Measure the time saved and reinvest it. Calculate how many hours you reclaimed. Where did those hours go? If they went back into the highest-value activities in your business, the AI integration is working. If they disappeared into more AI tinkering, you have a productivity illusion, not a gain.

Ongoing: Stay calibrated. The AI landscape moves fast. Tools that are best today may not be best in six months. Schedule a monthly 30-minute review of what tools you are using and whether better options exist. Do not chase every new model release. Do stay informed.
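If you want to put numbers on the Week 1 audit, a spreadsheet works, and so do a few lines of Python. This is a minimal sketch. The task names, hours, and category labels are invented for illustration, so swap in your own week.

```python
from collections import defaultdict

# The three labels from the Week 1 audit, shortened into identifiers.
CATEGORIES = ("only_me", "ai_drafts", "ai_with_oversight")


def audit(tasks: list[tuple[str, float, str]]) -> dict[str, float]:
    """Return the share of weekly hours that falls in each category."""
    totals: dict[str, float] = defaultdict(float)
    for _name, hours, category in tasks:
        if category not in CATEGORIES:
            raise ValueError(f"unknown category: {category}")
        totals[category] += hours
    grand_total = sum(totals.values())
    if grand_total == 0:
        return {category: 0.0 for category in CATEGORIES}
    return {category: totals[category] / grand_total for category in CATEGORIES}


# Hypothetical 40-hour week.
WEEK = [
    ("Customer calls", 12, "only_me"),
    ("Product decisions", 14, "only_me"),
    ("Blog drafts", 6, "ai_drafts"),
    ("Support replies", 4, "ai_drafts"),
    ("Meeting transcription", 4, "ai_with_oversight"),
]

shares = audit(WEEK)
delegable = shares["ai_drafts"] + shares["ai_with_oversight"]
print(f"Delegable share of the week: {delegable:.0%}")
```

Run it again in Week 4 with your actual hours. If the "only_me" share has not grown, the reclaimed time went somewhere other than your highest-value work.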

AI Tools European Bootstrappers Are Actually Using in 2026

Not a comprehensive list. A curated one, based on what works at small budgets.

- ChatGPT / Claude / Gemini: General LLM tasks, drafting, research synthesis. Use whatever you can afford; all three are capable for most startup tasks. Avoid vendor lock-in.

- Perplexity AI: For AI-powered web research with citations, significantly better than LLMs alone for fact-finding.

- Cursor / GitHub Copilot: For solo technical founders, these are the highest ROI AI tools available right now.

- Midjourney / DALL-E: For visual content. Excellent for rapid prototyping of branding ideas. Not a replacement for a designer at final production quality.

- Whisper / Otter.ai: For transcription of calls, meetings, user interviews. Incredibly time-saving.

- Make (formerly Integromat) + LLM APIs: For building automated workflows with AI in the middle. This is where zero-code automation for startups becomes genuinely powerful for small teams.

Shocking Stats Every Founder Should Know

- According to Stanford’s 2025 AI Index, generative AI adoption among small businesses in Europe grew by 38% year-on-year, but fewer than 20% of adopters had any formal AI usage policy.

- McKinsey research found that generative AI and related technologies could automate work activities that take up 60-70% of employees’ time today, but this applies to specific task categories, not entire jobs.

- Goldman Sachs research suggests that fewer than 5% of occupations can be fully automated by AI. The rest involve partial automation of specific tasks.

- Hallucination rates in leading LLMs have dropped significantly with improved RLHF techniques, but as of 2025, even the best models hallucinate on complex factual queries at rates that make unreviewed AI output unsuitable for high-stakes decisions.

- The average European startup founder spends 23 hours per week on administrative and communication tasks that AI tools can significantly compress.

Mistakes to Avoid Right Now (Insider Tips From the Trenches)

Do not use AI to fake expertise you do not have. Your customers will notice. Your investors will notice. Depth comes from experience, not from better prompts.

Do not skip prompt engineering. The gap between a mediocre AI output and a great one is almost always in the prompt quality. Spend time on this. It compounds.

Do not assume the model knows your business. LLMs have no context about your specific market, your specific customers, or your specific competitive position unless you explicitly give them that context in the prompt. Give them the context. Every time.

Do not use AI-generated legal documents without lawyer review. This is especially important in Europe, where GDPR, IP law, and employment law are complex and jurisdiction-specific. A European founder friend of mine almost signed an AI-generated contractor agreement containing a clause that would have transferred all IP she ever created to the client. She caught it before signing. Do not rely on luck.

Do not build an AI dependency into customer-facing products without a fallback. AI API availability is not 100%. Build graceful degradation into anything customer-facing.

Do not ignore EU AI Act implications. If you are building with AI in Europe, the EU AI Act is now in force. Understanding the EU AI Act’s risk tiers is not optional for any startup building AI-powered products for European customers.
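On the fallback point above, graceful degradation can be as simple as a wrapper that retries a couple of times and then returns a safe, human-written message. This is a minimal sketch, not tied to any vendor: `ask_model` is a placeholder for whatever function calls your LLM provider, and `flaky_model` and `healthy_model` are hypothetical stand-ins so the example runs on its own.

```python
import time
from typing import Callable

FALLBACK_MESSAGE = (
    "Our assistant is temporarily unavailable. A team member will reply by email."
)


def answer_with_fallback(
    ask_model: Callable[[str], str],
    question: str,
    retries: int = 2,
    delay_seconds: float = 0.0,
) -> tuple[str, bool]:
    """Return (text, used_ai). Falls back to a static message if every attempt fails."""
    for attempt in range(retries + 1):
        try:
            return ask_model(question), True
        except Exception:
            # In production, catch your client library's specific error types instead.
            if attempt < retries:
                time.sleep(delay_seconds)
    return FALLBACK_MESSAGE, False


def flaky_model(question: str) -> str:
    """Hypothetical stand-in that simulates an API outage."""
    raise TimeoutError("simulated outage")


def healthy_model(question: str) -> str:
    """Hypothetical stand-in that simulates a working API."""
    return f"Draft answer to: {question}"


print(answer_with_fallback(healthy_model, "How do I reset my password?"))
print(answer_with_fallback(flaky_model, "How do I reset my password?"))
```

The `used_ai` flag matters as much as the message. Log it, so you know how often customers are seeing the fallback and can route those conversations to a human.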

Real Projects, Real Results: How We Use AI at Fe/male Switch and Beyond

Elona Musk, our AI entrepreneur: Fe/male Switch features Elona Musk as an AI character who heads our SEO strategy and content creation. She is not a gimmick. She represents a real use case: AI producing consistent content output at a speed no small team could match with human writers alone. Every article goes through human review, but the draft velocity is transformational for a bootstrapped operation.

PlayPals, our AI co-founders: In the Fe/male Switch startup game, every player gets access to a PlayPal, an AI co-founder that guides them through idea validation, market research, and early startup decisions. PlayPal is not giving legal or financial advice. It is asking structured questions that help founders think more clearly. That is the right use case: AI as a thinking partner, not an authority.

Learn Dutch with AI: This project is a direct case study in narrow AI applications done well. Rather than building a general AI language teacher, we built a focused tool for people who specifically need Dutch for business or integration purposes in the Netherlands. The narrower the use case, the better the AI performs and the more genuine value it delivers to users.

These are not hypothetical case studies. These are running products with real users. The lesson in each case is the same: specific use case, human oversight, clear value to the end user.

FAQ

What is the difference between generative AI and traditional AI?

Traditional AI systems were built with explicit rules, decision trees, or narrowly trained models for specific tasks, like image classification or spam filtering. Generative AI (Gen AI) refers to AI systems that can produce new content, including text, images, audio, and code, by learning statistical patterns from massive datasets. Large Language Models (LLMs) like ChatGPT, Claude, and Gemini are a specific type of generative AI focused on text generation. The critical difference for founders: traditional AI does one thing predictably; generative AI does many things flexibly but less predictably.

Will AI replace human jobs completely?

The evidence says no, at least not in the near term and not at scale. Yann LeCun, Meta’s Chief AI Scientist and Turing Award winner, argues that current AI systems, including the most capable LLMs, understand the world less well than a cat does in physical terms. What AI will do is change the nature of many jobs by automating specific task categories within them. The jobs most affected are those involving repetitive, well-defined language or data tasks with no deep expert judgment required. Founders, specialists, and people with genuine domain expertise are at low risk of replacement and high opportunity to use AI as a multiplier.

What is RLHF and why does it matter for startup founders?

Reinforcement Learning from Human Feedback (RLHF) is the training technique that turns a raw language model into a helpful chat assistant. Human raters score AI outputs on helpfulness and accuracy, and those scores are used to update the model to produce outputs humans rate more highly. For founders, this matters because RLHF is why AI sounds confident even when wrong. The model was trained to sound helpful, not to be factually accurate. Understanding this helps you set realistic expectations and build proper verification workflows into any AI-assisted process.

Why do LLMs hallucinate and can it be fixed completely?

LLMs hallucinate because they are trained to predict plausible text, not to retrieve verified facts. When a model encounters a question for which its training data provides weak statistical signal, it still generates an answer because that is what it is optimized to do. RLHF reduces hallucination by training models to express uncertainty more often, but it cannot eliminate the structural cause. Retrieval-Augmented Generation (RAG), web search integration, and explicit prompt engineering to encourage “I don’t know” responses are the most effective mitigation strategies available today. Hallucinations cannot be completely eliminated with current architecture.

Is the EU AI Act relevant for small bootstrapped startups?

Yes, and many European founders are underestimating this. The EU AI Act creates risk tiers for AI systems. High-risk applications, including AI in hiring, credit scoring, education assessment, and some healthcare contexts, face significant compliance requirements. Even if you are not building in a high-risk category, if you are building AI-powered products for EU customers, you need to understand which tier you fall into and what disclosure and documentation obligations apply. Ignorance of the regulation is not a defense. Budget time to understand this before shipping, not after.

How should a bootstrapped startup with no AI budget start using AI?

Start with free and low-cost tiers. ChatGPT’s free tier, Claude’s free tier, and Google Gemini are all capable enough for most startup content and research tasks. The real investment is not money. It is time spent on prompt engineering. A well-written prompt for your specific use case, refined over 2-4 weeks of iteration, will produce dramatically better results than a poor prompt sent to the most expensive model available. Build a personal prompt library. Share it with your team. That library is a business asset.

What is the biggest mistake founders make with Gen AI?

Trusting unverified AI output in high-stakes contexts. This includes using AI-generated content without fact-checking, using AI-drafted legal clauses without lawyer review, presenting AI-generated financial projections without underlying verified data, and building customer-facing products that deliver AI output without any human oversight layer. The second biggest mistake is using AI to do things that require genuine expertise. AI can simulate expertise convincingly. Simulation is not the same as expertise, and the difference matters enormously when real decisions and real money are involved.

How does RAG (Retrieval-Augmented Generation) work and should my startup use it?

RAG is a technique that lets an LLM answer questions based on documents you provide, rather than relying solely on its pre-trained knowledge. You store your documents (product manuals, knowledge bases, research papers) in a vector database. When a user asks a question, the system retrieves the most relevant document chunks and feeds them into the LLM prompt alongside the question. The model then generates an answer grounded in your actual documents. Hallucination rates drop significantly. For any startup building an AI product that needs to answer questions accurately about a specific body of knowledge, RAG is worth implementing. Several no-code and low-code RAG tools now exist that make this accessible without a full engineering team.
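The retrieve-then-prompt flow described above can be sketched in plain Python. Two loud assumptions: the word-count vectors and cosine similarity here are a crude stand-in for a real embedding model and vector database, and the documents are invented. The sketch stops at building the prompt and does not call any LLM.

```python
import math
import re
from collections import Counter

# Made-up knowledge base; in practice these would be chunks of your own documents.
DOCS = [
    "Refunds are available within 30 days of purchase.",
    "The Pro plan includes priority email support.",
    "Data is stored on servers located in the Netherlands.",
]


def embed(text: str) -> Counter:
    """Crude stand-in for an embedding: lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[term] * b[term] for term in a.keys() & b.keys())
    norm_a = math.sqrt(sum(value * value for value in a.values()))
    norm_b = math.sqrt(sum(value * value for value in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(question: str, docs: list[str], top_k: int = 1) -> list[str]:
    """Return the top_k documents most similar to the question."""
    query = embed(question)
    ranked = sorted(docs, key=lambda doc: cosine(query, embed(doc)), reverse=True)
    return ranked[:top_k]


def build_grounded_prompt(question: str, docs: list[str], top_k: int = 1) -> str:
    """Put the retrieved text in front of the question, with an explicit escape hatch."""
    context = "\n".join(f"- {doc}" for doc in retrieve(question, docs, top_k))
    return (
        "Answer using only the context below. "
        "If the context does not contain the answer, say you do not know.\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )


print(build_grounded_prompt("Where is my data stored?", DOCS))
```

The quality of a RAG system lives mostly in the retrieval step. If the wrong chunk comes back, the model will write a fluent answer grounded in the wrong document.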

How do I write better prompts for my specific startup context?

The single most important practice: give the model your context before asking for anything. Include who you are, who your customer is, what problem you solve, and what tone fits your brand. Then state the task with explicit constraints. Then tell the model what format you want the output in. Finally, tell it what to do when it is uncertain (say so, rather than guessing). Example structure: “You are writing for [company], which serves [customer] by solving [problem]. Tone: [tone]. Task: [task]. Constraints: [constraints]. If uncertain, say so explicitly. Format: [format].” Test this structure with 5-10 variations and keep the prompts that consistently produce the outputs you want.
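If you keep your prompt library in code rather than in a document, the example structure above maps directly onto a small template function. This is a minimal sketch. The company, customer, and task values are hypothetical, and the output is just a string you would paste or send to whichever model you use.

```python
from dataclasses import dataclass


@dataclass
class PromptContext:
    """The business context that should lead every prompt."""
    company: str
    customer: str
    problem: str
    tone: str


def build_prompt(
    ctx: PromptContext,
    task: str,
    constraints: list[str],
    output_format: str,
) -> str:
    """Fill the context-task-constraints-format structure from the article."""
    constraint_text = "; ".join(constraints) if constraints else "none"
    return (
        f"You are writing for {ctx.company}, which serves {ctx.customer} "
        f"by solving {ctx.problem}. Tone: {ctx.tone}. Task: {task}. "
        f"Constraints: {constraint_text}. If uncertain, say so explicitly. "
        f"Format: {output_format}."
    )


# Hypothetical example values.
context = PromptContext(
    company="Acme Invoicing",
    customer="freelancers in the EU",
    problem="late client payments",
    tone="plain and friendly",
)

prompt = build_prompt(
    context,
    task="draft a payment reminder email",
    constraints=["under 120 words", "no legal threats"],
    output_format="subject line, then body",
)
print(prompt)
```

Defining the context once and reusing it means every prompt in your library starts with the same facts about your business, which is the part founders most often forget to include.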

Can AI help a bootstrapped European startup compete with better-funded competitors?

Yes, and this is one of the genuinely exciting aspects of the current moment. A solo founder or small team using AI tools well can produce content, code, and customer communications at a velocity that would have required a 5-10 person team five years ago. The speed and cost advantages are real. The important caveat: AI compresses execution time, not strategic thinking time. The advantage goes to founders who use AI to free up time for high-quality thinking, customer conversations, and product decisions, not to founders who use AI to avoid those things. Funded competitors will also be using AI. The edge goes to whoever uses it with more judgment.

The Bottom Line

The people who lose to AI are the ones who refuse to understand it. They either fear it so much they ignore it, or trust it so much they stop thinking.

Gen AI and LLMs are extraordinarily powerful tools for specific tasks. They are not sentient. They are not planning to take over. They are not replacing human judgment at the level that matters for building a real business. They are statistics engines that can produce impressive outputs when given good inputs.

Your job as a European bootstrapping founder is not to fear the tool. It is to understand it well enough to use it better than your competitors.

The natural stupidity this article is warning about is not yours specifically. It is the collective stupidity of treating AI as either a monster or a magic wand. Both attitudes produce bad outcomes. The rational position, backed by the actual science from people like Yann LeCun and grounded in real operational experience building products like Fe/male Switch, CADChain, and Learn Dutch with AI, is that AI is a tool with clear strengths and clear limits.

Learn the strengths. Respect the limits. Use it to do more of what matters.

That is the whole playbook. Everything else is noise.