Here is the thing: you are not just using AI to work faster. You are probably using it in ways that measurably reduce your ability to think, remember, and reason independently. And the people most at risk are not teenagers glued to TikTok. They are knowledge workers, founders, and entrepreneurs who use AI tools all day, every day, believing they are augmenting their intelligence.

The research does not fully agree with that belief. And in 2025 and 2026, that research got a lot more specific.

This article goes through what neuroscience actually says about how AI usage changes the human brain, which cognitive functions are at risk and which may benefit, what the MIT brain scan study really found (and what it did not), and what bootstrapped European startup founders should do about all of it before the damage becomes difficult to reverse.

TL;DR: Multiple studies, including a 2025 MIT Media Lab EEG experiment that had not yet been peer-reviewed when it was released, suggest that heavy reliance on AI for cognitively demanding tasks measurably reduces brain connectivity, impairs memory encoding, and weakens critical thinking over time. The effect is real but conditional: it depends on when and how AI enters your workflow. Cognitive psychologist Barbara Oakley and colleagues, in a paper posted on SSRN, link rising AI dependency to a documented reversal of the Flynn Effect (the decades-long rise in IQ scores, now going backward in several developed nations). For bootstrapped founders whose primary competitive asset is their own judgment, this is not a theoretical concern. The good news: the neurological damage appears largely reversible when the right cognitive habits are maintained, and AI used after deep engagement, rather than instead of it, can still accelerate output without cost to cognition.

Your Brain Is Not a Passive Tool. It Is a Use-It-or-Lose-It System.

The most important thing neuroscience has learned about the brain in the last 30 years is neuroplasticity: the brain physically changes in response to experience. Neural connections that fire repeatedly grow stronger. Connections that go unused weaken and eventually prune. This is not metaphor. This is structural biology.

I have studied this concept in depth in relation to bilingualism in kids. Acquiring more than one language rewires your brain. It takes more effort for a kid to master two or more languages, and bilinguals usually start speaking later than their monolingual peers, which laypeople may read as a developmental lag. In a sense it is one, but only because the brain is working so much harder.

I am curious to see how AI fits into the neuroplasticity concept.

The hippocampus, the brain’s primary engine for forming new episodic memories and cognitive maps, physically grows larger in people who regularly challenge it with complex navigation or learning tasks. London taxi drivers, who must memorize thousands of streets in order to obtain their license, have measurably larger hippocampi than the general population. And a 2024 population-based study analyzing 8.9 million U.S. death records found that taxi and ambulance drivers, whose jobs require constant high-stakes spatial navigation, had the lowest Alzheimer’s-related mortality rates of 443 occupations studied. Bus drivers and airline pilots, who follow predictable, cognitively repetitive routes, showed no such protective effect.

The implication is direct: the cognitive demands you place on your brain every day shape its structure over time. And the cognitive demands you outsource to a machine shape it too, only in the opposite direction.

Let’s break down what the research says is happening.

The Google Effect: Rehearsal for What AI Is Now Doing at Scale

The “Google Effect” was first documented in 2011 by Sparrow, Liu, and Wegner, in a study led by Betsy Sparrow at Columbia University. They found that when people knew information was available via search, they were less likely to remember it and more likely to remember where to find it rather than what it contained. The brain, efficient as always, stopped encoding information it had offloaded to an external system.

Search engines shifted our memory from content to location. We stopped remembering facts. We started remembering URLs.

That makes sense to me. I bet something similar happened when a calculator was introduced. We got more room for things other than using the bulk of our executive functioning on doing math.

A 2024 meta-analysis in Frontiers in Public Health confirmed this “Google Effect” at scale: intensive internet search behavior measurably changes how people retain information, reducing intrinsic memory formation in favor of external retrieval dependence. AI takes this several steps further. Search engines require you to evaluate and synthesize sources. AI delivers a synthesized answer. The cognitive work of comparison, evaluation, and integration, which is exactly the work that builds durable neural schemata, gets eliminated.

Here is the sequence the research describes:

- You outsource a cognitive task to AI.

- The brain reduces activation in the networks responsible for that task.

- Repeated outsourcing weakens those networks over time through synaptic pruning.

- When the AI is removed, the networks are measurably less capable than before.

This is basic neurobiology. And it’s not necessarily bad. To me it just means that now we have extra capacity to do something more fun with our brains.

The MIT Study: Brain Scans Don’t Lie

In June 2025, the MIT Media Lab released a study that went viral for a reason. Led by research scientist Nataliya Kos’myna, the “Your Brain on ChatGPT” experiment recruited 54 participants between ages 18 and 39 from five Boston-area universities and wired them with EEG (electroencephalography) headsets to monitor brain activity in real time during essay writing.

Participants were split into three groups and wrote essays in sessions spread over four months: one group used ChatGPT freely, one used Google search only, and one used nothing at all. In a fourth session, the ChatGPT and brain-only groups swapped conditions.

The results were predictable if you understand neuroscience.

What the EEG Data Showed

The brain-only group consistently showed the highest alpha and beta wave connectivity across frontal-parietal and semantic networks, the brain’s executive command center for deep thinking, working memory, planning, and idea synthesis. The search engine group showed moderate connectivity. The ChatGPT group showed the lowest connectivity of all three, with reductions across cognitive networks that persisted across all four months of the study.

This should not come as a surprise, but I do not see anything negative here: isn’t it wonderful that there’s a tool that allows us to use fewer resources on a task? Remember the calculator? The point here is that humans need to use that freed capacity for something more demanding, like curing cancer.

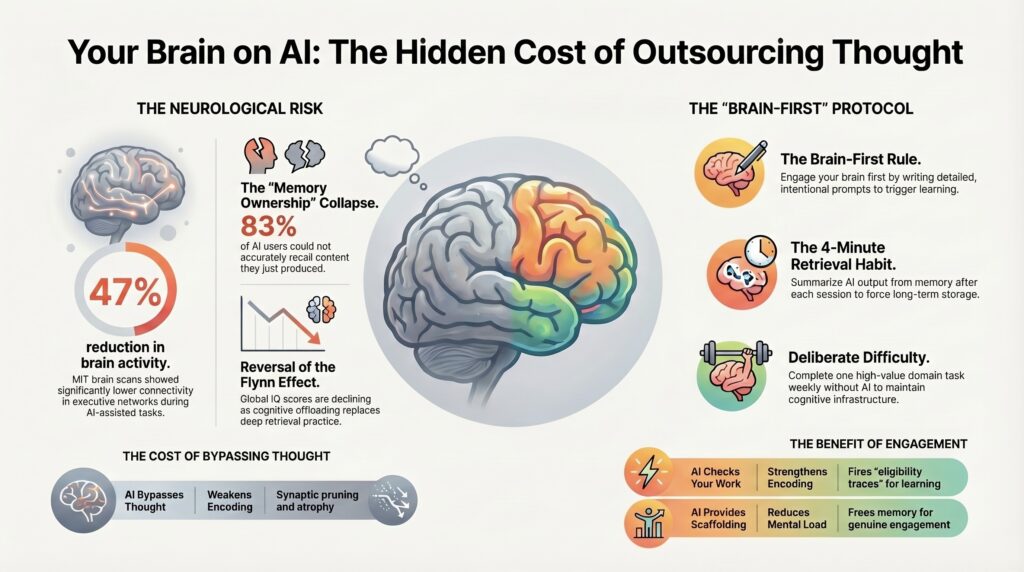

Expert commentary on the EEG data described what amounted to a 47% reduction in brain activity in the ChatGPT group compared to the control group. This is not a subtle effect. The MIT researchers described it as “the neurological signature of intellectual outsourcing in real time.”

And critically: when the LLM group was switched to the brain-only condition in session four, they did not recover immediately. Their brain connectivity remained lower than that of the brain-only group, which had been training its networks without AI across the first three sessions. The effect lingered.

The reverse was also true. When the brain-only group was given access to ChatGPT in session four, their brain activity actually increased relative to the AI-only group. People who had trained their networks through demanding, unassisted cognitive work used AI more effectively, not more passively.

If you look at the brain as a muscle, it makes sense that a warmed-up muscle performs the exercise better.

The Memory Collapse Hidden in Plain Sight

There was another result from the MIT study that received less attention but may matter more for founders specifically. The ChatGPT group showed a notable failure of memory ownership: 83% of participants in the LLM group could not accurately quote key points from essays they had just finished writing minutes earlier.

They could not remember their own work. Duh, because it was not their work per se.

This is what the researchers called “a fading sense of ownership over cognitive output.” When the AI wrote the essay, the author’s brain did not encode it as its own memory. The information passed through without being consolidated. And without consolidation, there is no learning, no schema formation, and no durable knowledge.

The researchers were careful about the study’s limitations. The sample was small, participants were drawn from a narrow geographic area, and the study had not yet been peer-reviewed as of publication. The findings should be treated as preliminary, though the EEG methodology is well-established and the effect sizes were large enough to be meaningful.

The Memory Paradox: Why IQ Scores Are Now Going Backward

The MIT study documented short-term neurological change. In May 2025, cognitive psychologist Barbara Oakley and colleagues including Terrence Sejnowski published The Memory Paradox: Why Our Brains Need Knowledge in an Age of AI, which connects this neurological change to a much larger and older trend.

The Flynn Effect, named after researcher James Flynn, describes decades of rising IQ scores across the 20th century, roughly 3 IQ points per decade from the 1930s through the 1980s. Researchers attributed this rise to better nutrition, broader education, and increased exposure to abstract thinking.
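To get a feel for the size of that rise, here is a back-of-the-envelope sketch. It assumes the 3-points-per-decade rate quoted above held steadily from 1930 to 1980 (the exact endpoints are my assumption) and uses the conventional scaling of modern IQ tests, where one standard deviation is 15 points.

```python
POINTS_PER_DECADE = 3        # rate quoted in the text
IQ_STANDARD_DEVIATION = 15   # conventional scaling of modern IQ tests


def cumulative_gain(start_year: int, end_year: int) -> float:
    """Total IQ-point gain, assuming a constant rate per decade."""
    decades = (end_year - start_year) / 10
    return POINTS_PER_DECADE * decades


# Assumed endpoints: 1930 to 1980, i.e. five decades.
gain = cumulative_gain(1930, 1980)
print(gain, gain / IQ_STANDARD_DEVIATION)  # 15.0 1.0
```

Under those assumptions the cumulative gain is about 15 points, roughly one full standard deviation, which is why a reversal of the trend gets so much attention.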

Then scores started reversing. In the United States, Britain, France, and Norway, measured IQ has been declining since roughly the 1970s to 1990s depending on the country, with the steepest declines in fluid reasoning, the kind of flexible, novel problem-solving that is most relevant to startup founders navigating ambiguous markets.

Oakley and her co-authors argue that two intertwined causes explain the reversal:

- A shift in educational practice away from direct instruction, memorization, and retrieval practice toward “constructivist” models that assumed children learn best by discovering knowledge independently.

- The rise of cognitive offloading to digital tools, from calculators to smartphones to AI, which reduced the cognitive load required to complete tasks and therefore reduced the formation of durable internal schemata.

The neuroscience mechanism is specific. When the brain is actively retrieving information from memory, it does not merely reproduce that information. It strengthens the neural manifold associated with it, building richer, more interconnected representations that support flexible reasoning. This is why retrieval practice, the act of recalling information without looking it up, is one of the most robust findings in learning science: testing improves retention more than re-reading by a wide margin.

When AI eliminates that retrieval process, the manifold never forms properly. The brain retains access to the external source but not the internal architecture needed to reason from it.

Here is why this matters for your startup.

The Startup Brain Under Cognitive Offloading

I am Violetta Bonenkamp, founder of CADChain and Fe/male Switch, bootstrapped across the Netherlands and Malta over the last several years. My work on the “gamepreneurship” methodology draws directly from developmental psychology and cognitive neuroscience, so this research lands differently for me than for most.

And I am a big fan of foreign language acquisition methodologies, which led me to neuroscience. There’s so much we don’t know, and that’s why it is as fascinating to me as startups. The thrill is real.

Here is what the data means in practice for founders who use AI every day.

The Judgment Problem

A founder’s most valuable asset is the quality of their judgment: the ability to read a market signal, synthesize competitor intelligence, evaluate a team member’s feedback, and make a fast, good decision under uncertainty. This judgment is the product of accumulated experience encoded as durable neural schemata through repeated retrieval and reflection.

If you outsource the cognitive work of synthesis and evaluation to AI before you do it yourself, those schemata do not form. You gain speed. You lose depth. And over months and years of daily AI dependency in exactly the mode the MIT study tested, your ability to reason from first principles without the AI crutch measurably weakens.

The Fe/male Switch Design Principle

At Fe/male Switch, we built the game to generate productive cognitive friction. Players must make startup decisions with incomplete information, under time pressure, with real feedback loops. The learning does not come from being told the right answer. It comes from making the wrong one, experiencing the consequence, and encoding the lesson through emotional salience and retrieval under pressure.

This is also the neuroscience argument for why Fe/male Switch works where passive startup courses often fail. The same principles that make our gamepreneurship methodology stick are the principles that protect your cognition when using AI: active generation, desirable difficulty, and retrieval before outsourcing.

GPS, Spatial Memory, and the Cautionary Tale That Already Played Out

The AI-cognition story already has a preview in GPS navigation, and the outcome was not good for the hippocampus.

A landmark study published in Scientific Reports assessed 50 regular drivers and found a significant negative correlation between lifetime GPS experience and hippocampal-dependent spatial memory strategies. Frequent GPS users formed worse cognitive maps, encoded fewer environmental landmarks, and performed significantly worse on unaided navigation tasks. And in a three-year longitudinal follow-up, greater GPS use between assessments correlated with steeper declines in spatial memory. The damage was progressive and ongoing.

The mechanism is the switch from allocentric navigation (hippocampal, map-based, flexible) to egocentric navigation (caudate-nucleus-based, turn-by-turn, rigid). GPS turns you from an explorer who builds a mental map into a passenger following instructions. The hippocampus stops being exercised. Over time, this reduced hippocampal engagement may increase vulnerability to age-related cognitive decline and Alzheimer’s risk, given that the hippocampus is among the first brain regions to atrophy in Alzheimer’s disease.

Now ask yourself what happens when AI becomes your GPS not just for navigation but for reasoning, writing, decision-making, and analysis.

The pattern is identical. Active cognitive engagement replaced by passive instruction-following. The brain’s relevant networks disengage. The atrophy begins.

Unless…

The Dual-Use Evidence: When AI Helps Cognition

This is not a simple story with one villain. And I am very excited about the other side of the research, because the cognitive offloading story would be incomplete without it.

Several studies document genuine cognitive benefits from well-designed AI use.

A systematic review in the Journal of Cognition, Emotion & Education found that AI-based visualization tools helped learners manipulate abstract concepts without exceeding working memory capacity, effectively reducing extraneous cognitive load while preserving intrinsic engagement with the material. AI systems that delivered immediate corrective feedback helped learners reallocate attention more effectively, strengthening consolidation of information.

The key phrase in that research: AI that aligns with cognitive architecture is beneficial. AI that bypasses it is harmful.

Here is the distinction the research supports:

| AI Use Pattern | Cognitive Effect | Why |
|---|---|---|
| AI completes task before you attempt it | Weakens encoding, reduces brain connectivity | No prediction error, no retrieval, no consolidation |
| AI checks your answer after your attempt | Strengthens encoding | Error correction fires eligibility traces in neural networks |
| AI provides scaffolding for novel material | Reduces extraneous load, aids comprehension | Frees working memory for genuine engagement |
| AI delivers answers without requiring synthesis | Bypasses schema formation | Passive consumption produces no durable learning |
| AI introduces desirable difficulty (harder retrieval) | Improves long-term retention | Bjork’s theory of desirable difficulties confirmed at scale |
| AI summarizes material you’ve already worked with | Aids review without damage | Consolidation supported rather than bypassed |

The key variable is cognitive sequence: whether your brain engages first, or the AI does. When you do the hard thinking, make the first attempt, generate the initial draft, or form the initial hypothesis, and then use AI to check, extend, or refine, the neural networks are exercised before being assisted. When you skip straight to AI output, they are not.
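For readers who think in code, the sequence rule can be written down as a tiny decision function. This is only an illustrative sketch of the distinction described above, not anything taken from the studies: the `AIUse` fields and the three labels are hypothetical names I chose for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AIUse:
    """One AI-assisted task, described by two yes/no questions (hypothetical fields)."""
    attempted_first: bool     # did you draft, predict, or hypothesize before asking AI?
    requires_synthesis: bool  # does the AI's role still leave synthesis or retrieval to you?


def classify(use: AIUse) -> str:
    """Label a usage pattern according to the brain-first sequence rule."""
    if use.attempted_first:
        return "augmentation"  # AI checks, extends, or refines your own attempt
    if use.requires_synthesis:
        return "scaffolding"   # AI supports novel material, you still do the thinking
    return "offloading"        # AI output replaces the thinking entirely


print(classify(AIUse(attempted_first=True, requires_synthesis=False)))   # augmentation
print(classify(AIUse(attempted_first=False, requires_synthesis=True)))   # scaffolding
print(classify(AIUse(attempted_first=False, requires_synthesis=False)))  # offloading
```

The order of the checks mirrors the argument: whether you engaged first is asked before anything else, because that is the variable the research points to.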

What Cognitive Offloading Really Means at the Neurochemistry Level

Let’s go one level deeper, because this is where the research gets genuinely surprising.

Every time the brain encodes new information, prediction errors drive the process. When you expect one thing and something different happens, the brain releases a signal (involving dopamine in the striatum and acetylcholine in the basal forebrain) that flags the mismatch as important and worth encoding. This process, called the prediction error signal, is one of the primary mechanisms by which new learning gets written into long-term memory.

The Memory Paradox paper by Oakley et al. describes how this mechanism depends on the brain having prior expectations to violate. You need an internal model to generate a prediction. And you need to have encoded previous knowledge to build that internal model. When AI replaces the initial engagement, no internal model forms, no prediction is generated, and therefore no prediction error drives encoding.

The brain literally has nothing to learn from. Not because the information is not there, but because the cognitive sequence that drives encoding never ran.

This is also why the MIT study’s most disturbing finding was not the reduced brain activity during AI use. It was the failure to re-engage when AI was removed. The brain-only networks had not been called upon enough to maintain their capacity. The cognitive debt had accumulated silently across months of daily use.

The Cognitive Debt Accumulation Model for Founders

Here is a framework to think about this at the startup level, because the stakes for founders are different from those for students.

What you use, you keep. What you outsource, you lose.

A student who outsources essay writing loses essay-writing skills. The question is: do we need that skill?

A founder who outsources strategic synthesis loses strategic synthesis skills. And strategic synthesis, the ability to hold multiple contradictory signals in working memory, find non-obvious patterns, and generate hypotheses that others have missed, is the cognitive function that justifies the founder’s existence in the first place.

Think about what most founders outsource to AI by default: first drafts of investor communications, market analysis, competitive comparisons, product positioning, legal document review, financial modeling explanations.

These are exactly the tasks that build pattern recognition and durable schemata. But are they really what you want to spend your days doing?

The Five Cognitive Risks of Heavy AI Use, Ranked by Research Evidence

Based on the available peer-reviewed and preprint research as of April 2026, here is a ranking of the cognitive risks, from best-evidenced to more speculative.

1. Weakened episodic memory encoding for AI-assisted tasks (strong evidence)

The MIT study provides direct neuroimaging evidence. Work completed with heavy AI assistance is less well remembered by the person who nominally produced it. The memory trace is weak because the encoding process was bypassed.

2. Reduced functional brain connectivity in networks used for the offloaded task (moderate-strong evidence)

The EEG data from the MIT study shows reduced alpha and beta connectivity in frontal-parietal and semantic networks with sustained LLM use. Effect sizes were large, though the study was small and preliminary.

3. Hippocampal-dependent spatial and associative memory decline with GPS-type AI navigation (moderate evidence)

The Scientific Reports GPS study documents longitudinal decline over three years. The parallel to AI reasoning assistance is mechanistically plausible, though not yet directly tested for LLM use.

4. Schema atrophy and reduced fluid reasoning from chronic cognitive offloading (moderate evidence, theoretical linkage)

The Oakley et al. Memory Paradox paper makes the theoretical connection to the Flynn Effect reversal, linking it to reduced retrieval practice and schema formation. The IQ data is real and replicated. The causal attribution to AI specifically is plausible but involves inference across multiple bodies of evidence.

5. Metacognitive miscalibration: believing you understand more than you do (emerging evidence)

When AI produces polished, confident output, users tend to adopt it without deep verification. Over time, this pattern may reduce the habit of critical evaluation. Several studies document that students using AI rate their own understanding higher after AI-assisted learning but perform worse on follow-up tests. This “illusion of competence” is perhaps the most dangerous risk for founders making high-stakes decisions.

The SOP: How to Use AI Without Losing Your Brain

Here is a practical protocol, grounded in the research, that I use across my own work at CADChain, Fe/male Switch, and the Mean CEO blog.

The “Brain First” Rule

Before asking AI anything, spend enough time writing a prompt that contains as many details as possible. Writing the prompt creates the internal prediction that the brain then updates when AI provides a different or better answer. Without that effort, no prediction error fires, and no learning is encoded.

I always spend a lot of time on the prompts. I build a lot of workflows, and just mapping out the architecture fries my brain. Then come the tedious prompt improvements and testing. By the time the workflow is set up and tested I am cognitively drained. But I love it: this work is creative and I love doing it. What I hate is the repetitive stuff that comes after: now repeat the same workflow 100 or 1,000 times. That would drive me crazy, and that’s where AI comes in: it does the tedious repetitive work according to my well-thought-out process better than I do.

The Retrieval Habit

At the end of each AI-assisted work session, go through the output one last time, then close the AI tool and write down the three most important things you learned or decided during the session. Do not look at the AI output while writing. This forces retrieval, which is the mechanism that converts working memory into long-term storage. It takes four minutes and it dramatically improves retention. That is, of course, if you need to retain that information, which in many cases you do not. If you just need to send that boring bureaucratic report, then by all means, outsource it to AI and let the receiver use their cognitive functioning to the brim.

The Deliberate Difficulty Trigger

Identify one domain per week where you deliberately do not use AI because you enjoy the process. Write the first draft of that investor email without AI if that is what drives you. Solve that data problem if data is your thing. Do the first pass of that competitive analysis yourself, then use it as the prompt for the AI to see what you might have missed. This is not nostalgia for a harder way of working but the foundation of stable mental health. It is maintenance of cognitive infrastructure that, once lost, takes months to rebuild.

The False Confidence Check

After any AI-generated output you plan to act on, ask yourself: “Can I explain the reasoning behind this in my own words, without looking at the AI output?” If not, the output is not yet yours. Your brain has not encoded it. It is a memory you borrowed but did not form.

Remember that teaching is the best method of learning.

Let that sink in.
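If you like checklists, the three per-session habits so far (Brain First, the Retrieval Habit, and the False Confidence Check) can be sketched as a small end-of-session review. This is a hypothetical illustration of the protocol, not a tool I ship: the class name, field names, and the threshold of three takeaways are simply my reading of the rules above.

```python
from dataclasses import dataclass, field


@dataclass
class SessionReview:
    """End-of-session self-check for one AI-assisted work session (hypothetical)."""
    own_attempt_before_ai: bool               # Brain First: detailed prompt or draft came first
    takeaways_from_memory: list[str] = field(default_factory=list)  # Retrieval Habit
    can_explain_without_output: bool = False  # False Confidence Check

    def gaps(self) -> list[str]:
        """Return the habits that were skipped in this session."""
        missing = []
        if not self.own_attempt_before_ai:
            missing.append("brain first")
        if len(self.takeaways_from_memory) < 3:
            missing.append("retrieval habit")
        if not self.can_explain_without_output:
            missing.append("false confidence check")
        return missing


review = SessionReview(
    own_attempt_before_ai=True,
    takeaways_from_memory=["pricing hypothesis", "two competitor gaps"],
    can_explain_without_output=True,
)
print(review.gaps())  # ['retrieval habit']
```

An empty list means the session followed the protocol. Anything else tells you which habit to pick up before you act on the output.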

The Sleep and Consolidation Window

The research on sleep’s role in memory consolidation is unambiguous. Hippocampal replay during slow-wave sleep transfers compressed memory traces to the neocortex for long-term storage. Decisions, insights, and frameworks you engage with during the day need adequate sleep to consolidate. A founder who chronically undersleeps is running on a consolidation deficit, regardless of how sophisticated their AI tools are. The same goes for nutrition and physical health.

What the Research Says About the Reversibility Question

There is genuinely encouraging data here, because of neuroplasticity (remember it from the beginning of the article?). The MIT study found that when LLM users were forced to work without AI in session four, they showed reduced but not eliminated neural engagement. They were impaired relative to the brain-only group, but not cognitively incapable. The networks were still there. They had atrophied from disuse, not from structural damage.

The GPS research suggests a similar pattern: spatial memory can recover with re-engagement, though recovery takes consistent, sustained effort over weeks. The foundational London taxi driver research demonstrated that hippocampal volume grew progressively across years of demanding spatial work. Growth follows demand.

The Oakley et al. paper argues for “cognitive complementarity” as the target: a deliberate marriage of strong internal knowledge and smart external tools, where the internal knowledge is built first and the external tool is used to extend and verify it, not to replace the building process.

This is the correct model. It is also harder to maintain than simply asking AI for everything. And it requires the kind of deliberate practice that the Fe/male Switch methodology is built on: structured, demanding, feedback-rich engagement that builds genuine internal competence before any tool is brought in to scale it.

Going to the gym takes effort, but it is worth it if you want a healthy body and a clear mind. Your brain also needs exercise, but it is up to you to decide what kind of training your brain gets. I outsource many things to AI so that I have more cognitive capacity to do things that matter to me.

Artificial Intelligence can make humans so much smarter if we don’t let Natural Stupidity get in the way.

FAQ: How AI Usage Affects Human Memory and Cognition

Does using AI every day actually make you less intelligent?

The evidence suggests it can, under specific conditions. The MIT Media Lab study found that heavy AI reliance for cognitively demanding tasks like essay writing measurably reduced brain connectivity over four months, weakened memory encoding, and impaired the ability to think independently when AI was removed. The Memory Paradox paper by Oakley and colleagues links chronic cognitive offloading to the reversal of the Flynn Effect, a decline in measured IQ scores across the US, UK, France, and Norway. The causal chain is not proven at the individual level, and the long-term research is still limited, but the mechanisms are well-grounded in cognitive neuroscience. The risk is real and proportional to how much AI replaces your own cognitive effort rather than extending it.

What is cognitive offloading and why does it matter for startup founders?

Cognitive offloading is the practice of delegating cognitive tasks to external tools, from writing notes to using a calculator to asking an AI to synthesize competitive intelligence. It is not inherently harmful: offloading routine, low-stakes tasks frees working memory for higher-order thinking. The problem arises when offloading replaces rather than supports the cognitive work that builds durable internal knowledge. For founders, the domains most at risk are those where judgment matters most: market analysis, strategic positioning, product decisions, and communication. When AI habitually completes these tasks on the founder’s behalf, the internal schemata that make that judgment trustworthy never fully form.

What did the MIT brain scan study on ChatGPT actually find?

The MIT Media Lab study, led by Nataliya Kos’myna, tracked 54 participants using EEG headsets across four months of essay writing under three conditions: ChatGPT-assisted, search-engine-assisted, and unaided. Participants using ChatGPT showed the lowest brain connectivity across frontal-parietal networks associated with executive function, working memory, and deep thinking. They also showed weaker memory retention, with 83% unable to accurately recall content from essays they had just written. When AI users were switched to unaided writing, their brain activity did not recover to the level of the unaided group. The study was preliminary and not yet peer-reviewed as of publication, and the sample was small. The researchers explicitly warned against reading it as proof that AI causes permanent cognitive damage. It does suggest, however, that habitual AI reliance for high-cognitive-load tasks carries measurable short-term neurological costs that persist after AI is removed.

Does GPS use cause the same kind of brain damage as AI use?

GPS does not cause brain damage in the pathological sense, but it does measurably reduce hippocampal-dependent spatial memory through the same mechanism: cognitive offloading of tasks the hippocampus would otherwise perform. A Scientific Reports study found that greater lifetime GPS experience correlated with worse spatial memory, lower cognitive mapping ability, and fewer landmarks encoded during navigation. A three-year longitudinal follow-up showed these effects deepened over time with continued GPS use. The hippocampal implications are worth taking seriously, since the hippocampus is also among the first brain regions to atrophy in Alzheimer’s disease, and spatial navigation is one of the main activities that keeps it engaged. The parallel to AI use is mechanistically plausible, though not yet directly tested for LLM use: passive instruction-following replaces active cognitive map-building, and the brain structures that depend on that activity gradually become less engaged.

Can the negative cognitive effects of AI use be reversed?

The available evidence suggests yes, at least partially and within the timeframes studied so far. The MIT study showed that LLM users who switched to unaided writing had lower connectivity than the trained brain-only group, but they were not cognitively disabled. The GPS research shows that spatial memory can recover with re-engagement, and the London taxi driver studies show that demanding spatial work grows hippocampal volume progressively over years. The key variable is sustained, demanding cognitive engagement without the AI crutch. Recovery appears to require weeks to months of consistent effort, not a single session. The practical implication: the longer the period of heavy AI reliance without deliberate cognitive maintenance, the longer the recovery period.

What is the Flynn Effect and how does AI relate to it?

The Flynn Effect is the observation that measured IQ scores rose steadily across much of the 20th century, at roughly three points per decade from the 1930s to the 1980s. Researchers attributed this rise to better nutrition, more formal education, and exposure to abstract reasoning tasks. Since the 1970s through the 1990s depending on the country, the effect has reversed in several developed nations including the US, UK, France, and Norway. Barbara Oakley and colleagues published the first neuroscience-based explanation in their 2025 paper, linking the reversal to two trends: pedagogical shifts away from retrieval-based learning, and the rise of cognitive offloading to digital tools. The causal chain is plausible and mechanistically coherent but involves inference across multiple independent data streams. IQ tests also measure specific skills that can change independently of general intelligence. The link to AI specifically is inferred from the broader offloading literature rather than directly measured.

How is cognitive offloading different from simply using tools?

The line between useful tool use and harmful cognitive offloading runs through the neural mechanism of encoding. Using a hammer does not replace a cognitive function your brain would otherwise perform. Using a calculator to verify arithmetic you have already done mentally still exercises the mental procedure, because the calculator only checks your work. Using an AI to write a first draft you never attempted bypasses the prediction-error process entirely, and that process is the mechanism by which new cognitive structure is built. The question to ask about any AI-assisted task: does using AI here replace an internal process that would have built useful neural schemata, or does it extend a process I have already engaged with? The former is offloading. The latter is augmentation. The distinction matters enormously for whether you come out of a year of AI use sharper or duller.

What cognitive functions does AI use most threaten in bootstrapped founders specifically?

The three most at-risk cognitive functions for founders who rely heavily on AI are: strategic synthesis (the ability to hold multiple ambiguous signals in working memory and identify non-obvious patterns), critical evaluation (the ability to spot flaws, test assumptions, and push back on confident-sounding claims, including from AI), and domain expertise formation (the accumulation of pattern recognition through deep engagement with specific domains over time). These are also the functions most frequently displaced when AI takes over daily startup workflows such as market analysis, due diligence, and content production. Protecting them requires deliberate maintenance through the “Brain First” protocol and regular AI-free practice in your most critical domains.

Is it possible to use AI heavily without cognitive decline?

The research suggests yes, if the cognitive sequence is correct. The critical variable is whether your brain engages with the material before AI does. Users who built strong internal knowledge first (the brain-only group in the MIT study) and then used AI showed higher brain connectivity when using it, not lower. They used it more effectively and retained more from the interaction. The cognitive complementarity model proposed by Oakley et al. describes this explicitly: strong internal knowledge plus smart external tools is the target, not external tools instead of internal knowledge. For founders, this means investing in genuine expertise in your domain, doing the hard cognitive work yourself before bringing AI in to scale or refine it, and treating retrieval practice and deliberate difficulty as non-negotiable cognitive maintenance, just as physical training is non-negotiable for physical health.

What practical habits protect cognitive function while using AI daily?

The research supports five specific habits. First, the “Brain First” rule: always generate your own first attempt before asking AI anything, which creates the prediction the brain will update and encode. Second, end-of-session retrieval: write down the three most important things from each AI-assisted work session without looking at the output, forcing consolidation. Third, weekly deliberate difficulty: pick one important domain per week to work through entirely without AI, maintaining the relevant networks in active use. Fourth, the false confidence check: after any AI output you plan to act on, ask yourself whether you can explain the reasoning in your own words, and do not act until you can. Fifth, prioritize sleep: memory consolidation during slow-wave sleep is the biological mechanism that converts daily engagement into durable knowledge, and no AI tool compensates for a chronic consolidation deficit.

What This Means for You

The neuroscience is telling a consistent story across multiple independent research streams: the brain is a use-it-or-lose-it system, and AI offers an unprecedented opportunity to stop using your brain without noticing.

That is not an argument against AI. It is an argument for using it with the same intentionality you would apply to any powerful tool that changes you as you use it.

As founders, our cognitive capacity is the product. The quality of our judgment, the depth of our pattern recognition, the speed of our synthesis under uncertainty: these are what we sell, even when we think we are selling software or services or experiences. Anything that erodes those capacities without our awareness is a liability, regardless of how much short-term productivity it appears to provide.

The research does not say stop using AI. It says: use AI after you think, not instead of thinking. Build internal schemata before you outsource their application. Treat your brain as infrastructure that requires maintenance, not as a resource that only needs to be pointed at AI tools to produce results.

The founders who will win over the next five years are the ones who build both: the deepest internal knowledge in their domain, and the best AI-augmented systems for scaling it. One without the other produces either a bottlenecked expert or an articulate, fast, and gradually emptying shell.

Train your brain first. Then build a second brain. Then you will be unstoppable.